A/B testing tools were some of the first software to incorporate AI into their platforms in a meaningful way. And they did it long before LLMs and AI agents were the hot topic.

So while many tools have slapped “AI-powered” onto their marketing materials (without actually improving their product), good AI A/B testing platforms have legitimately changed the way that teams run experiments.

But these new capabilities have important limits and introduce new risks. This post provides hype-free coverage of what these tools can do today.

AI A/B Testing: Overview

I’m assuming you are already familiar with A/B testing and how it works: form a testable hypothesis, build a controlled experiment, split your traffic, measure performance, and find a winner. If you need a refresher, start with our guide to A/B testing basics, because we’re just going to jump right in.

AI A/B testing refers to experimentation platforms that use artificial intelligence to assist with different stages of the testing process, helping teams to:

- Run more tests with less manual work

- Accelerate the pace of experimentation

- Test more complex ideas without writing code

- Serve more personalized experiences during testing

- Automatically document learnings from past tests in a searchable record

The net effect is that a full-fledged A/B testing program is now within reach for teams that don’t have dedicated copywriters, designers, and developers.

Of course it’s ideal to have those resources, but AI A/B testing tools can help close skill gaps by generating copywriting, code, and CRO audits instantly.

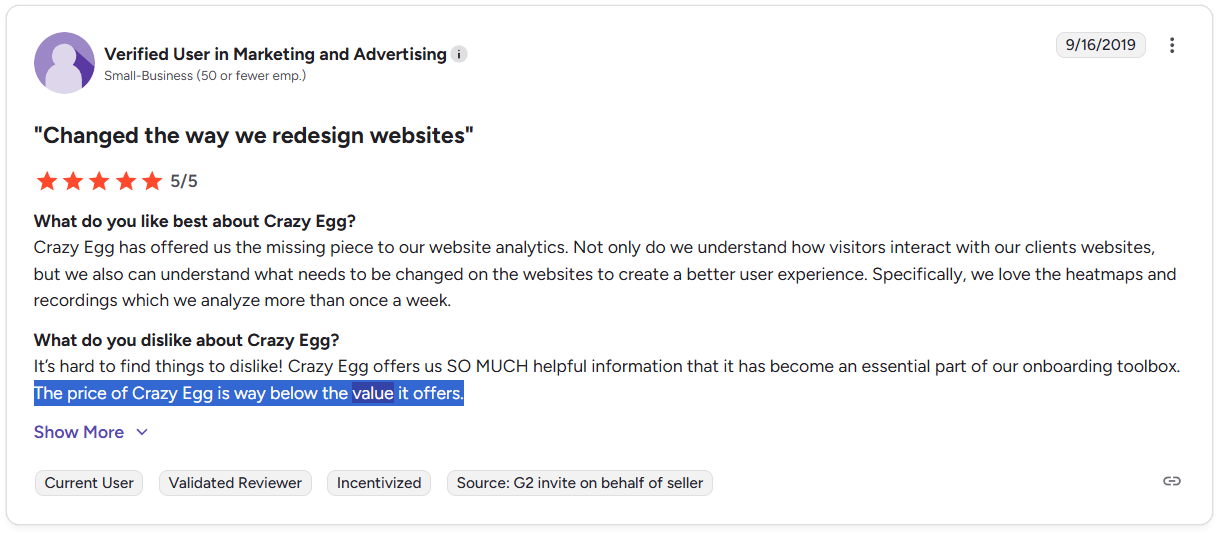

These are real capabilities that exist today. Teams are already using these tools with documented success.

Why AI works for A/B testing

I understand if you are skeptical. There is an ungodly amount of hype around AI, and plenty of brands are overselling what their products can do.

But A/B testing is genuinely well-suited to benefit from AI.

During a test, every interaction gets recorded. Every test tells you what works and what doesn’t. There’s no ambiguity about what success looks like.

Unlike many mushier marketing problems, A/B testing provides a near-ideal environment for AI to learn.

And on top of that, you have these newer generative AI tools that can produce the copy, images, designs, and code you need to run the next test.

You can learn more, and act on what you learned faster.

AI in A/B testing is not new

Most of the popular A/B testing tools already incorporate machine learning (ML, a subset of AI) into their products, and have for years. Some examples:

- Multi-armed bandit testing, which relies on ML algorithms to shift traffic in real-time toward variations that perform better.

- Predictive targeting, which uses ML to predict which users are most likely to convert when shown a particular variation based on behavioral and demographic signals.

- Anomaly detection, which figures out the normal patterns in your data so it can flag issues that don’t look right.

In all of these cases, the platform uses ML to “learn” from incoming data in order to improve results or catch problems early.
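To make the bandit idea concrete, here is a minimal sketch of Thompson sampling, one common multi-armed bandit algorithm. Treat it as an illustration rather than how any particular platform works: the function names, the variation labels, and the conversion rates in the simulation are all made up for the example.

```python
import random


def choose_variation(stats, rng):
    """Pick the variation whose sampled conversion rate is highest.

    stats maps a variation name to [conversions, non_conversions].
    Each rate is drawn from a Beta posterior with a uniform prior, so
    variations with little data still get explored occasionally.
    """
    best_name, best_sample = None, -1.0
    for name, (wins, losses) in stats.items():
        sample = rng.betavariate(wins + 1, losses + 1)
        if sample > best_sample:
            best_name, best_sample = name, sample
    return best_name


def simulate(true_rates, visitors, seed=0):
    """Send simulated visitors through the bandit.

    true_rates are hypothetical conversion rates used only to generate
    fake outcomes; a real test would record actual user behavior.
    """
    rng = random.Random(seed)
    stats = {name: [0, 0] for name in true_rates}
    for _ in range(visitors):
        name = choose_variation(stats, rng)
        converted = rng.random() < true_rates[name]
        stats[name][0 if converted else 1] += 1
    return stats


if __name__ == "__main__":
    result = simulate({"A": 0.05, "B": 0.08}, visitors=5000)
    for name, (wins, losses) in result.items():
        print(name, "visitors:", wins + losses, "conversions:", wins)
```

As the evidence accumulates, the better-performing variation wins the sampling step more often, so it receives a growing share of traffic without anyone manually reallocating it.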

This generation of AI-enabled platforms builds on this well-understood and heavily tested foundation. Unlike many other software products, A/B testing tools are built by people who have a decade or more of experience shipping AI features to their customers.

Where is AI A/B testing today?

The clearest way to understand the current landscape is to think about where the human experimenter fits in.

Broadly speaking, AI is being worked into testing programs in three ways:

- Human-led with AI assistance: The experimenter drives every decision about what to test, how to set it up, and how to interpret results, using AI to reduce the manual work at each stage.

- AI-led with human supervision: The platform generates ideas, builds variations, allocates traffic, and interprets results, with humans reviewing inputs, outputs, and analytics.

- Automated testing with human-defined guardrails: The platform runs tests continuously within parameters defined by the human team, optimizing tests without much hands-on management.

Most teams operate somewhere between the first and second paradigm today, but the third is where this technology is heading.

I was not able to find anyone who claimed to have taken the human fully “out of the loop,” though it is now possible to do so, in theory.

The products that offer “automated AI A/B testing” are generally talking about:

- Automating specific parts of the testing process, not the full program.

- Running continuous multi-armed bandit testing, where the platform promotes winners and pauses losers, but there is still human supervision.

If you are curious about the leading edge of automated experimentation, this episode of the Outperform Podcast is great. The discussion focuses on multi-armed bandit algorithms and considers both A/B and multivariate testing, but you will get a good sense of both the rewards and risks of letting tests run on their own.

Today, the typical A/B testing team is still firmly in the driver’s seat. They use AI to automate manual tasks and augment their ability to create impactful tests.

Let’s look at what these tools can help you do.

Existing AI A/B Testing Capabilities: Benefits and Limitations

These five sections cover what you can do with AI A/B testing platforms today:

- Generate test ideas and variations

- Assist with the design and configuration of experiments

- Forecast test impact

- Enhance personalization

- Interpret and summarize results

I’ll walk through these capabilities, highlighting where each one goes beyond traditional tools, how teams are using them, and any important limitations and risks.

We’ll cover a few emerging capabilities separately at the end of the post.

1. Generate test ideas and variations

Most AI A/B platforms include tools to help you generate assets for experiments. You decide what to run, but instead of coming up with ideas or coding the variants manually, you can ask the system to create these for you.

What it can do:

- Scan a page and suggest hypotheses based on the content, target audience, or historic performance.

- Propose new layouts or design changes.

- Generate variations of headlines, copywriting, images, CTA buttons, or offers.

- Revise copy and components for different user segments.

- Create variants or experiment briefs based on prompts.

- Convert natural language requests into simple code or design edits.

- Enable brand controls to guide content creation.

The obvious benefit is speed. Much of the legwork that went into building an A/B test can be automated with generative AI.

These capabilities also make it possible for a single person to create tests that would have previously required a copywriter, designer, and developer working together.

Potential risks and limitations:

- Testing surface-level variations that don’t produce meaningful outcomes.

- Introducing generic “LLM-style” copywriting and images.

- Creating variations that optimize for the test at the expense of brand strategy.

- Building tests that ignore important context like the funnel stage or buyer personas.

By enabling people to do a lot more at a faster pace, there’s a temptation to run more tests rather than better ones. That’s a slippery slope.

I’ve seen claims about AI A/B testing like, “you can set up experiments in five minutes!”

That may be true, but the old saying “garbage in, garbage out” applies. Audiences tend to react poorly to marketing content that reads as AI-generated, and letting AI drive the full creative process is likely to lead to poor outcomes.

These capabilities work best when AI handles the manual work and humans handle the thinking. The real value is giving writers, strategists, and designers more time to reinvest in the big-picture goals of the experiment.

2. Assist with the design and configuration of experiments

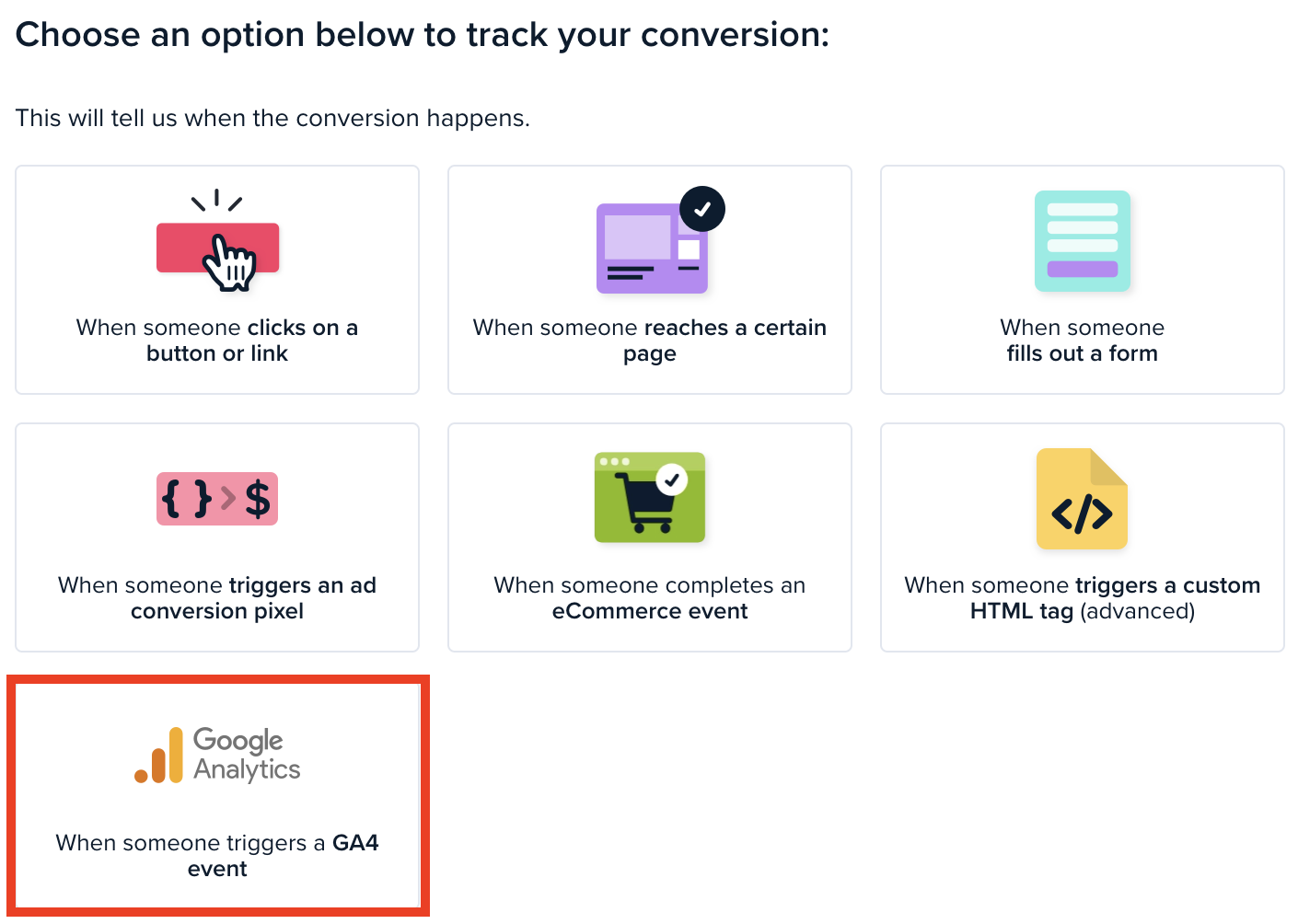

Before an A/B test runs, you have to lock in a few key elements. What is the primary metric that defines success? Are there countervailing metrics we should track so we know we didn’t hurt anything we also care about? How long should the test run?

Traditional A/B testing tools make it fairly easy to select key metrics, estimate sample sizes, and project test durations. AI A/B testing tools can use historical data and pattern recognition to provide additional assistance during set up.

What it can do:

- Convert natural language prompts into test setups (e.g. “Run a test on returning users optimizing for revenue per session”).

- Recommend primary and countervailing metrics based on your goals, page types, and historical data.

- Flag tests with a high probability of being underpowered based on historical data.

For teams running lots of experiments, AI tools can help standardize and streamline the setup process. It’s going to be easier to run a greater variety of tests on a greater number of pages.

They can also help less experienced teams avoid basic errors that waste valuable testing time or hurt the conversion rate, leading to lost sales.
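For a sense of what an “underpowered” flag is checking, here is a rough sketch using the standard sample size approximation for comparing two conversion rates. The function names, the default 5% significance level and 80% power, and the traffic numbers in the usage example are my assumptions, not settings from any specific tool.

```python
import math
from statistics import NormalDist


def sample_size_per_variation(baseline_rate, relative_lift,
                              alpha=0.05, power=0.8):
    """Approximate visitors needed per variation (two-sided z-test).

    baseline_rate: current conversion rate, e.g. 0.05 for 5%.
    relative_lift: smallest lift worth detecting, e.g. 0.10 for +10%.
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


def is_underpowered(daily_visitors, max_days, baseline_rate,
                    relative_lift, variations=2):
    """True if expected traffic falls short of the required sample."""
    needed = sample_size_per_variation(baseline_rate, relative_lift)
    return daily_visitors * max_days < needed * variations


if __name__ == "__main__":
    # Hypothetical page: 500 visitors a day, two-week test window.
    print(sample_size_per_variation(0.05, 0.10))
    print(is_underpowered(500, 14, 0.05, 0.10))
```

The takeaway is that small expected lifts on low-traffic pages need far more visitors than most people guess, which is exactly the kind of mistake a setup assistant can catch before the test starts.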

Potential risks and limitations:

- Flawed recommendations stemming from AI reliance on historical data that no longer applies (e.g. you changed the page last month).

- The platform may select metrics that are easy to measure instead of the ones that reflect your business goal, such as optimizing for clicks instead of revenue.

- Discouraging creative risk, as AI tools may recommend test designs that have worked before rather than bold experiments.

- AI tools may hallucinate, picking metrics or scoring test ideas based on fabricated data.

The risk is that people treat the suggestions as authoritative without questioning whether they line up with the goals of the test. Can you understand and verify the rationale behind the recommendation?

Treating the AI recommendations as inputs to be considered rather than accepting them without scrutiny is the safer play. This protects teams from running over-standardized, cookie-cutter experiments, or running with test ideas that have no basis in reality.

3. Forecast test impact

Some AI tools can help you estimate the likely impact of an A/B test idea before it launches by analyzing data from past experiments.

They can take into account things like page type, audience, the type of experiment (testing headlines vs. pricing changes, for example), and then estimate how the new test might behave.

It’s not perfect or prophetic, but it can give you a sense of which tests are the most promising.

What it can do:

- Estimate probable conversion rate ranges for new experiments

- Rank and prioritize test ideas based on predicted value

- Project potential revenue under current traffic conditions

- Identify test ideas that have underperformed in similar contexts

For teams with a lot of historical experimentation data, these tools can help you make evidence-based decisions about which tests are likely to have a meaningful impact on conversions.

Potential risks and limitations:

- Teams without a lot of historical data will not get useful guidance from these tools.

- If your traffic mix varies or you’ve updated a lot of content, the predictions will be less accurate.

- For split testing novel test ideas or big structural changes, forecasting is unlikely to provide reliable guidance.

These tools are extrapolating from past data, so where the data is thin or the test idea is really different, you can’t expect the predictions to be useful.

4. Enhance personalization

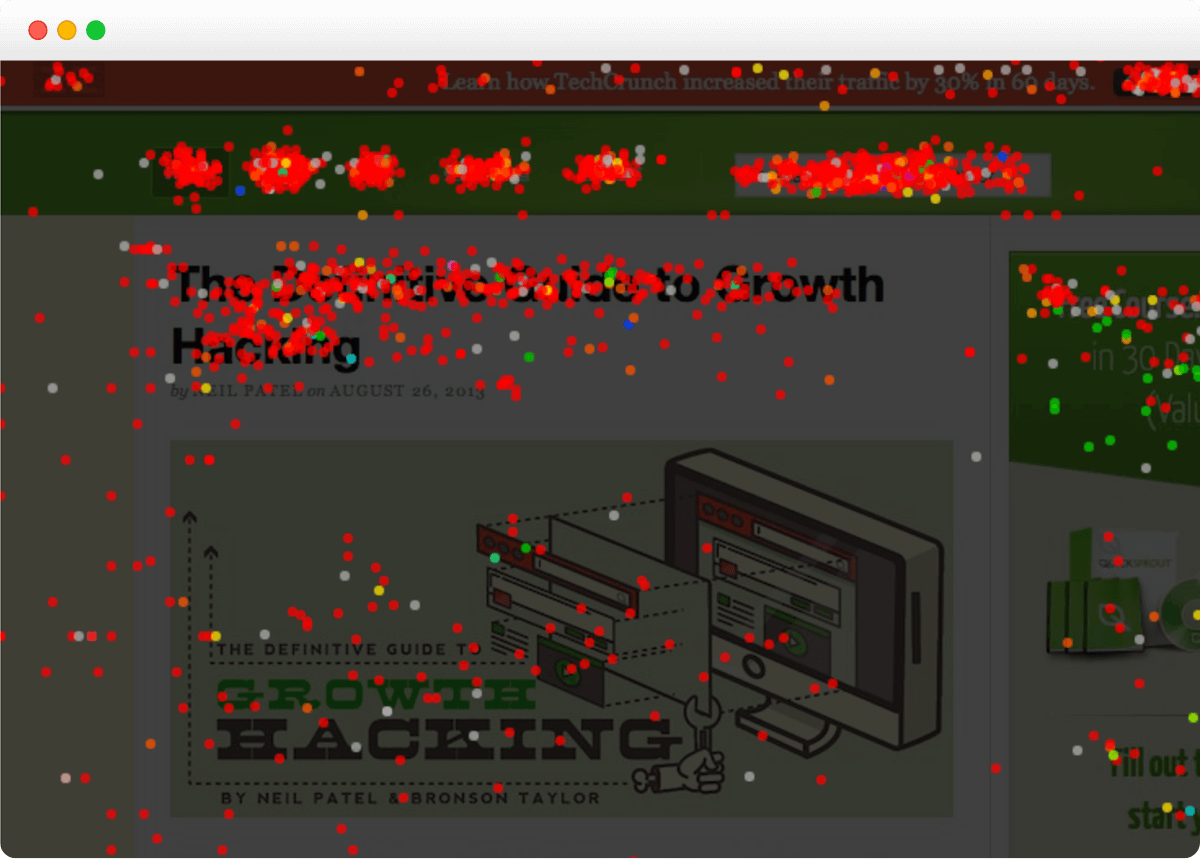

A/B testing tools that use multi-armed bandit algorithms steer traffic to winners based on performance. They can incorporate user attributes like behavior or demographics into the algorithm that decides which version to show which user.

These tools have been around for years, using AI and machine learning to empower teams to find winners faster, or run continuous multivariate tests that show variations to the segments they perform well with.

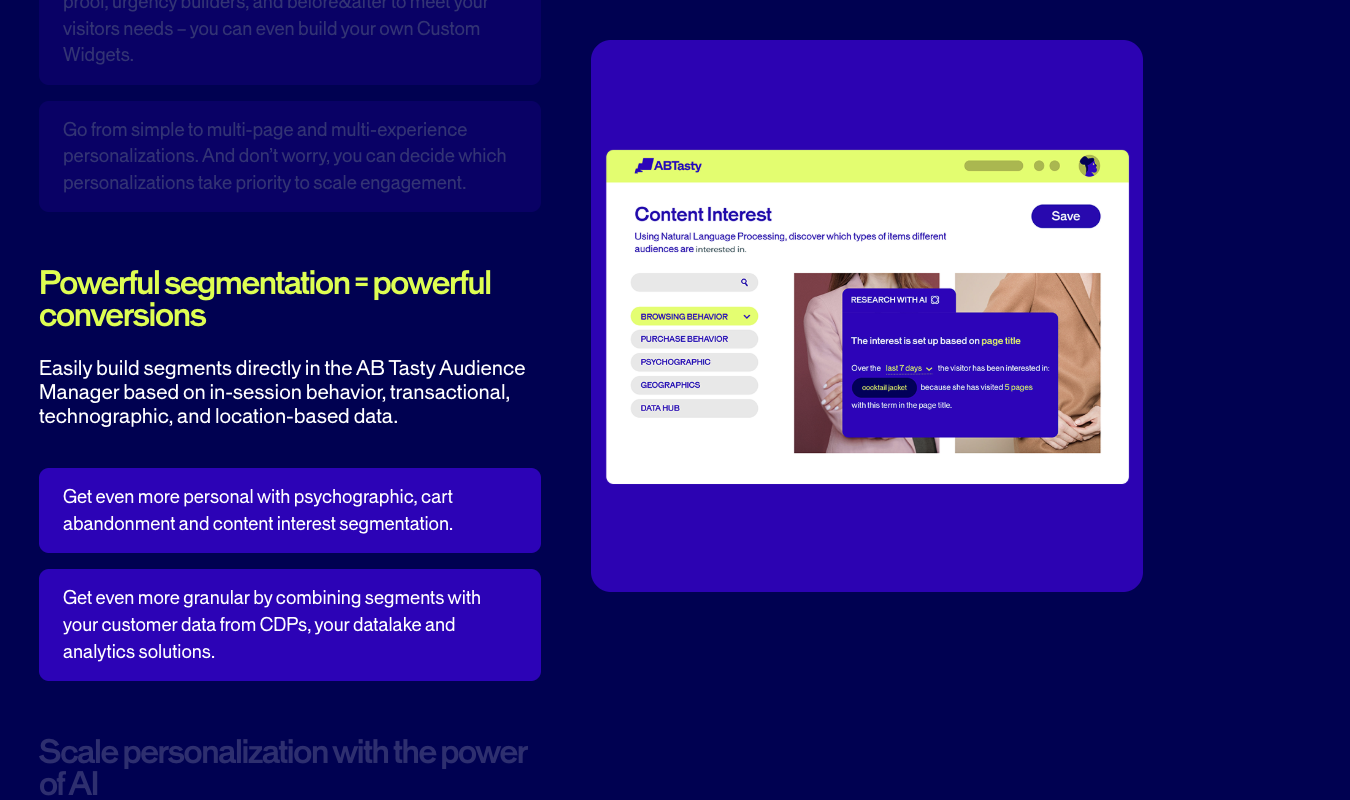

So what new value do AI A/B testing tools add? I often see it described by vendors as “hyper personalization,” which enables you to reallocate traffic during tests using a much richer set of behavioral and demographic signals.

What it can do:

- Automatically define user segments without manual input.

- Draw on multiple data sources simultaneously to decide which variation to show (e.g. CRM records, browsing history, or purchase patterns).

- Adapt variations and offers in real-time based on user behavior and website analytics.

- Enable micro-segmentation, and with some tools, achieve 1:1 personalization at scale.

Put simply, the newer tools can ingest a lot more information about your users to make decisions about which variation to show them.

Whereas traditional bandits allocated traffic with the sole goal of increasing your conversion rate, an AI A/B testing tool might consider multiple user attributes and behavior patterns to decide which variation to show.
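Here is a simplified sketch of that difference. Instead of keeping one scoreboard for the whole audience, this version keeps a separate scoreboard per segment, so different segments can end up with different winners. The class name and the segment labels are hypothetical, and real platforms use far richer signals than a single label per visitor.

```python
import random
from collections import defaultdict


class SegmentedBandit:
    """Thompson sampling with separate statistics for each segment."""

    def __init__(self, variations, seed=0):
        self.variations = list(variations)
        self.rng = random.Random(seed)
        # (segment, variation) -> [conversions, non_conversions]
        self.stats = defaultdict(lambda: [0, 0])

    def choose(self, segment):
        """Pick a variation for a visitor in the given segment."""
        def sampled_rate(variation):
            wins, losses = self.stats[(segment, variation)]
            return self.rng.betavariate(wins + 1, losses + 1)

        return max(self.variations, key=sampled_rate)

    def record(self, segment, variation, converted):
        """Store the outcome so future choices can use it."""
        self.stats[(segment, variation)][0 if converted else 1] += 1


if __name__ == "__main__":
    bandit = SegmentedBandit(["A", "B"])
    shown = bandit.choose("returning")
    bandit.record("returning", shown, converted=True)
    print(shown, dict(bandit.stats))
```

Notice that every segment starts from zero data. Splitting traffic this way is what makes personalization possible, and it is also why each segment takes longer to produce a trustworthy result.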

Potential risks and limitations:

- Hyper personalization requires a large volume of creative assets, which is challenging to manage, and fully relying on AI to generate them creates its own risks.

- You must have high-quality, up-to-date customer data in a centralized source that the AI tool can access, which is difficult to orchestrate and may raise privacy issues.

- Results are harder to interpret because there isn’t a clear winner, and it’s not always clear why particular variations performed well.

- Privacy regulations (e.g. GDPR) may not allow you to collect the data such personalization requires.

While AI A/B testing tools can help your teams personalize tests with a greater degree of precision, there is no guarantee that the wins you find are going to be durable. The more granular the user segments, the harder it is to reach statistically significant sample sizes.

What performs well in a particular moment with a specific user may not provide a true win that you can scale out across your site, which undercuts one of the key benefits of A/B testing.

5. Interpret and summarize results

Any decent A/B testing tool makes it fairly easy to see which version performed better. But there has always been a good deal of manual work when it comes to understanding the quality of the results, digging into the segment-level data, and reporting those results in plain language to stakeholders.

AI A/B testing tools are phenomenal at synthesizing large volumes of structured data and reporting on what they find.

What it can do:

- Generate simple summaries of the test outcomes

- Highlight meaningful differences in variation performance

- Alert you to traffic segments with unusually strong or weak performance

- Compare results to past experiments

- Suggest follow-up test ideas

- Draft shareable experiment reports

The benefit here is speed but also thoroughness, as the tools can surface patterns that a busy testing team might miss, especially when the top-line result isn’t statistically significant.

For teams that run dozens of concurrent tests, the assistance with analysis and documentation will eliminate a lot of post-test busywork. And for less experienced teams that are still learning what to look for, these features are even more valuable.

Potential risks and limitations:

- Summaries may include misinterpretations, hallucinations, or fabricated findings.

- Reports don’t necessarily capture the most meaningful business insights, even if they highlight statistically sound patterns.

- The analysis may fail to account for external factors, such as seasonality, ongoing marketing campaigns (yours or your competitors’), or big industry news.

As long as you keep a human “in the loop” when it comes to analysis, most of these potential risks can be avoided. For example, I would double-check any result that looks too good to be true (Twyman’s Law holds that a figure that looks interesting or unusual is usually wrong) before forwarding an AI-generated experiment report to a stakeholder who will never see the data first-hand.
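One lightweight way to double-check a headline number is to recompute it yourself from the raw visitor and conversion counts. The sketch below uses a standard two-proportion z-test. The function name and the counts in the usage example are hypothetical, and your platform may use a different statistical method (Bayesian or sequential, for instance), so expect small differences rather than an exact match.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (relative_lift, p_value) for variation B versus A.

    conv_*: number of conversions, n_*: number of visitors.
    The p-value is two-sided and uses a pooled standard error.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    relative_lift = (rate_b - rate_a) / rate_a
    return relative_lift, p_value


if __name__ == "__main__":
    # Hypothetical counts copied from a test dashboard.
    lift, p = two_proportion_z_test(500, 10_000, 600, 10_000)
    print(f"lift: {lift:.1%}, p-value: {p:.4f}")
```

If the lift or significance in the AI-generated summary is wildly different from what the raw counts give you, that is a signal to dig in before the report goes anywhere.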

Emerging AI A/B Testing Capabilities

The abilities we just covered are all here today, assisting teams with experimental work on live pages.

In this section, we’ll look at two emerging capabilities that frontier experimentation teams are already using, researchers are studying, and vendors have on their product roadmaps.

Simulating tests with synthetic users

What if: instead of running live traffic through your test, you showed it first to AI agents who could interact with the page variations?

Live testing is expensive, time-consuming, and potentially very risky. If you test a new idea on a high traffic site and it completely bombs, you could lose out on significant revenue.

The basic premise for using AI agents instead of live traffic to simulate a test is that it is relatively cheap, very fast, and low-risk.

Under controlled conditions, advanced agents are capable of engaging with websites, entering information into forms, and completing multi-step flows.

These “synthetic users” can also be trained on data from specific buyer personas so that their website behavior is aligned with the real users or shoppers you want to study.

Running a simulated test can help you:

- Generate insights on pages with limited traffic

- Uncover bugs in the design

- Identify points of friction in flows

- Compare simulated behavior across multiple design options

And you would get these insights without ever having to expose real traffic to the variations you want to test.

These capabilities do not fully replace actually running a controlled experiment. No one working on simulated A/B testing tools claimed that they do.

But they do provide recommendations that can help you prioritize tests and plan ahead. Recent research on simulated testing using LLM agents showed that, under controlled conditions, these models can pick up on real behavior patterns.

The authors of that study write, “Our position is that LLM agents should not replace real user testing” (emphasis original), but do argue that they can be useful for getting quick, low-risk feedback before running full experiments.

Fully autonomous AI experimentation

We’ve touched on a few of the different parts that AI A/B testing tools can automate, but what would it look like to have AI agents take over the entire testing lifecycle?

Some of the leading A/B testing platforms are starting to offer tools that get close to fully autonomous AI experimentation, and there are some newer AI-native tools working towards this goal as well.

I don’t think it will be long before we have AI agents:

- Crawling your site to find pages worth testing

- Developing their own hypotheses based on page performance and brand-aligned goals

- Generating content and code to create variations

- Setting up and running tests

- Monitoring performance and shifting traffic in real-time according to conversion metrics

- Personalizing experiences for specific segments or even individuals

- Stopping the test once it hits statistical significance

- Generating post-test analysis and reporting key findings

- Creating variations for follow-up experiments based on results

- Repeating this process over and over, continuously optimizing a site

How far away are we from AI-led A/B testing like this?

Closer than you might think.

Tools today are already capable of automating each step of this process, so what stands between us and fully autonomous testing is a matter of chaining together existing capabilities.

And there are already teams running multi-armed bandit tests for continuous learning, letting ML algorithms shift traffic towards winning variations more or less indefinitely.

But of course there is still human oversight of these processes, something that I don’t see going away anytime soon.

Business ethics, brand strategy, and market forces play a leading role in deciding whether a test makes sense to run and whether its outcomes are desirable.

While you can train agents to understand your strategy and brand, the risk posed by removing human supervision from the full experiment lifecycle is serious. It’s not hard to imagine a scenario where an AI agent generates content that converts very well but harms a brand’s reputation or bottom line.

My main takeaway from researching and thinking about AI A/B testing tools is that they can help intelligent, hard-working humans get more done. Where they can automate tedious tasks, fantastic, but they have a long way to go before replacing the talented teams that use them.