You just conducted customer interviews. Now what?

It feels like you have fascinating insights, but they’re trapped in transcripts and call recordings. How do you get from raw interview data to concrete benefits for your business?

I’ll cover the basics of setting up a customer interview program (it’s not as complicated as most guides make it out to be), but this post focuses on what happens when you are done.

A Quick Overview of Customer Interviews

A customer interview is a structured conversation with someone who uses your product or might use it in the future.

These are one-on-one conversations, where the goal is to better understand your customer, what they want, and how they make decisions. Here are some research objectives for customer interviews:

- Discovering challenges they faced that your product could solve

- Understanding the “why” behind actions like signup or cart abandonment

- Learning how customers perceive your product compared to competitors

- Mapping the buyer journey and the actual steps they took to make a purchase

- Hearing issues that frustrate customers and friction in the buying process

Interviews are designed around getting customers to address topics like these, in their own words.

What you learn during interviews is translated into initiatives that help your business perform better. For example:

- We discovered that customers really cared about getting set up quickly, so we revised our ad and site copy to lead with the idea that people could be “up and running in 20 minutes.”

- Customers shared that the biggest source of frustration was feeling abandoned after the sale, so we increased assistance during onboarding and built a 90-day check-in sequence.

- We found out that no one was using the new reporting module, so we amended our product roadmap to focus more on the integrations everyone was asking for.

Making adjustments like these can help you get more people interested in your product, increase their satisfaction using it, and help you build what people need.

There’s usually a significant difference between what customers desire and what brands think they want.

Customer interviews help you close that gap to ensure your team is doing work that better serves the people who pay their salary.

Types of customer interviews

Your research objective is best served by a particular type of customer interview, which in turn shapes who you should talk to, when you should talk to them, and what you are likely to learn.

Here is a breakdown of several widely used types of customer interviews:

| Type | What it is | When to run | Who to talk with | What you learn |
|---|---|---|---|---|
| Discovery interview | Open-ended conversations that elicit how customers think about a problem and what they are trying to accomplish. | When entering a new market, launching a new product, or feeling like you have lost touch with what customers care about. | Current or prospective customers who represent your best-fit buyer personas. | The language customers use to describe their problems, goals, desires, and unmet needs. |
| Switch interview | Focused conversations about the moment someone chose your product. | After large deals close, ideally within 30-60 days of the decision while details are still fresh. | New customers. | What triggered the switch, alternatives they considered, and what tipped their decision in your favor. |
| Exit/Churn interview | Conversations with customers who left, focused on understanding why. | As soon as possible after a customer leaves. | Recently churned customers. | Unmet expectations, product issues, relationship breakdowns, and how you can better serve existing customers. |
| Win/Loss interview | Conversations with buyers in recent deals (won and lost) to understand what drove the outcome. | After big deals close, ideally within a few weeks of the decision. | New customers and prospects that did not close. | What won/killed deals and how you are perceived compared to competitors. |

Say you are planning to expand into a new market and want to better understand the buyers. You can run discovery interviews with people who fit the profile you’re after to hear what they really care about and what their biggest problems are.

What you learn might lead you to revise your current messaging to better suit a new type of buyer, or it might inform you that your product is not actually a good fit for what this target market craves.

You don’t need to run a textbook version of any of these interview types in order to get value.

Borrow what you need from a format, adjust it to suit your objectives, and then follow the data where it leads. The important thing is going into an interview program with a clear question your business is trying to answer.

How many interviews do I need?

There is no perfect number. I have seen people say that you should aim for 20, but that seems high.

My experience has been that after around 6-7 interviews, you start hearing a lot of the same things: the same frustrations, pain points, and desired outcomes.

After that point, each interview starts to add less than the one that came before it.

I interviewed customers who used particular software products, like specific website builders and payroll software. Even though the customers came from diverse backgrounds and worked at very different companies, by interview number five or so, I started to feel like I was covering the same ground.

If your customer base is truly varied, or you are trying to get qualitative data from several unique segments, maybe you will need to run more interviews than I did.

I think 6-10 interviews is a reasonable starting point. If by interview 7-8, you are still picking up a lot of new information, maybe you should keep going.

Setting Up a Customer Interview Program

The first thing is getting buy-in from the people who you will ultimately present to, like the founders, owners, or leaders from sales, marketing, service, and customer success.

I’m not just talking about getting the budget approved. You want to bring these people in to shape the program.

They’ll have opinions about what questions matter, what you should focus on, and what a good interview participant looks like.

You will also learn who you absolutely cannot talk to, like active prospects, accounts with a bad history, or certain customers who aren’t representative of a good fit (even if they look good on paper).

Sales, in particular, can save you from a lot of wasted outreach. It’s going to be easier to incorporate their input early than it is to fix problems after you have already built a contact list.

In terms of setting up a program, I’m not going to give you a step-by-step process. There really isn’t one that’s guaranteed to match how this actually comes together in the real world.

Let’s just look at what you definitely need, and then you can figure out how to make it work.

An impartial interviewer

In an ideal world, you can get someone who has experience running customer interviews. They’ll know how to prepare, coordinate, and conduct these types of conversations from start to finish, while taking great notes and minimizing bias.

But if you can’t get a pro, get someone who is not a salesperson. Interviews are not sales calls, and if one turns into a sales call, you are going to frustrate the participant and get zero useful data.

Beyond that, you have to make sure they are truly impartial. Don’t select an interviewer who is worried about getting negative feedback or how it might reflect on them.

Discovering flaws and shortcomings that customers perceive about your brand is great! You do not want to pick someone who is going to get defensive or hide that sort of feedback.

If you have more than one interviewer, calibrate them so that they are using the same language and asking similar kinds of follow-up questions. A little bit of training can minimize differences in the data that come from researchers rather than customers.

Interview policies and processes

The whole interview process will play out over a few weeks, maybe longer depending on how complex it is to source participants and get them approved. Staying organized is crucial.

Here are some of the docs and processes you should plan on lining up:

- Participant selection methods: Explains who you are recruiting, why they are a good fit for interviews, and any steps necessary to get them approved.

- Outreach templates: Outlines the emails or call scripts you will use to ask people to participate, and how you follow up with them after the interview.

- Contact list: Captures all the key information you need about the candidate, scheduling with them, and links to their interview transcripts. A simple spreadsheet is fine.

- Interview policy: States the rules, goals, format, and ethical guidelines for getting qualitative feedback via interviews. Participants may want a copy that explains what you are using interviews for, what you will do with recordings, and how you are protecting sensitive data. You may want to have internal and external policies.

- Dialogue guide: Outlines the basic format and structure of the interview, key areas to discuss, and any specific questions that all participants must be asked. Open-ended interviews are good, but you also want to make sure you are getting enough similar data points so that you can make comparisons across participants.

- Follow-up plan: Covers the post-interview process, including thank-you messages and any agreed-upon check-ins. It may be valuable to run some of your planned initiatives by the customers you interviewed to see how they feel about proposed changes.

Tech and software

You may have all the tools you need already, but make sure that what you have is up to the task.

- Scheduling: You can get by with links to a Google or Microsoft calendar, but Calendly or something similar can minimize a lot of the back and forth that comes with coordinating interviews with multiple participants.

- Communication: Phone is great. Video conferencing software is great. Select something that your team is confident using and participants are likely to be comfortable with.

- Recording: Most video conferencing tools have recording features, which should work fine. Call recording software works for phone interviews. Be sure to get consent to record from participants.

- Transcription: Your conferencing or recording software may have transcription built in, which may be good enough. Expect to do some cleanup, especially if you are discussing subjects with a lot of technical jargon, and consider using a dedicated speech-to-text service if you need higher-quality transcriptions.

- Storage: All of your transcripts, recordings, and interview notes should live in a single, secure place. Something like Google Drive or Notion should be fine.

- Analysis: You don’t need special software for this, but it can really help. There are lots of dedicated AI transcript analysis tools, though general AI tools like ChatGPT, Claude, and Microsoft Copilot are fairly powerful themselves.

Output

Decide on your outputs ahead of time. What does “done” look like and who is going to see it?

By the end of the process, you will have three basic levels of output:

- Raw data: These are the unedited call recordings, call transcripts, video interviews, and notes you took during and after the conversation. Raw data will likely have PII (Personally Identifiable Information) in it and needs to be stored securely or destroyed at a later date, according to the interview policy.

- Curated data: This includes tagged transcripts and should be anonymized so that it can be used later as a customer intelligence artifact. It contains more information than you would want to share in a report, but could be useful for future content development, brand guidance, or as training data for AI customer service agents.

- Detailed report: This is what you will present to stakeholders and share with teams. It’s polished and covers the major, actionable insights you discovered during interviews. Consider putting together an executive summary for people who need the headlines without the detail.

A few customer interview tips

There’s lots of guidance for conducting interviews out there, most of which repeats the same fairly common-sense stuff: ask open-ended questions, be an active listener, and don’t try to sell your product.

I think it’s all fine and maybe you want to read up on the guidance if this is your first time ever running any sort of interview.

What I’ll add here are a few things that I think are important that I didn’t see the general tip lists cover.

- Do a dry run with a colleague before your first real interview. You can make sure the recording setup is good, that you feel confident kicking the call off, and work out any of the operational kinks.

- Get great audio quality. On your end, make sure you have a quiet location and a good microphone. Encourage participants to do the same. If they are quiet or their mic is lousy, ask them politely to speak louder or slower. The better the audio quality, the cleaner the eventual transcription will be.

- Be friendly, not too formal. My best interviews have had some laughs, and felt like we were chatting at a restaurant vs. in a business meeting. Avoid using jargon and buzzwords, if you can help it.

- Start with some easy, personal questions. Get to know them a little bit in the first five minutes. It warms them up to you, establishes a bit of rapport, and may provide you with some real-life details that will enable you to frame questions more personally later in the interview.

- “Can you say more about that?” is an amazing question. Most customers have never thought about the questions you are asking, and this gives them another shot to think through their answer.

- Ask who else they think you should talk to. Even if you don’t need a referral for additional interviews, you might be surprised by what they say.

- Take some notes immediately after the interview. You’ll always have the recording, but what you felt during the call is fleeting. Little insights and emotions will fade quickly, especially if you are conducting another interview shortly thereafter or rushing on to another task.

What To Do After Customer Interviews

This section lays out the basic steps you need to take to get from raw interview data to a clear and useful presentation.

In my own experience running customer interviews, this has never been a perfectly linear process. Things that seemed important in early interviews have turned out to be less consequential, and what I discovered in later interviews has changed my perspective on what I heard before.

1. Prep transcripts for analysis

Even if you pay for a service to transcribe interviews, there is usually a little bit of work on your end to clean the transcript and make sure that you have accurately captured the conversation.

Call transcripts are naturally messy. There are “umms,” inaudible words, half sentences, and lots of “you knows.” It’s up to you how to handle these moments. Just be consistent about how you deal with them.

When you are unsure what people said, you can go back to the recording and slow down the playback speed. That helps a lot.

At this point, you can also anonymize the transcripts, removing PII like people’s names, their kids’ names, company names, and other details that could be used to identify someone.
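
Here’s a minimal sketch of what a first anonymization pass could look like in Python. Everything in it is an assumption for illustration: the `anonymize` helper, the placeholder format, the sample names, and the simple email and phone patterns. A script like this only catches what you tell it to look for, so you would still want to read through each transcript yourself.

```python
import re

def anonymize(text, replacements):
    """Mask emails and phone numbers, then swap known identifying terms for placeholders."""
    # Mask anything that looks like an email address.
    text = re.sub(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+", "[EMAIL]", text)
    # Mask simple US-style phone numbers such as 555-123-4567.
    text = re.sub(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b", "[PHONE]", text)
    # Replace longer terms first so "Dana Smith" is handled before "Dana".
    for term in sorted(replacements, key=len, reverse=True):
        placeholder = replacements[term]
        pattern = re.compile(r"\b" + re.escape(term) + r"\b", re.IGNORECASE)
        text = pattern.sub(lambda match, p=placeholder: p, text)
    return text

# Hypothetical names and placeholders; build this list per interview.
replacements = {
    "Dana Smith": "[PARTICIPANT_1]",
    "Dana": "[PARTICIPANT_1]",
    "Acme Corp": "[COMPANY_1]",
}

raw = "Dana Smith from Acme Corp said to email dana@acme.example or call 555-123-4567."
clean = anonymize(raw, replacements)
print(clean)
# [PARTICIPANT_1] from [COMPANY_1] said to email [EMAIL] or call [PHONE].
```

Using the same placeholder for the same person across a transcript keeps the conversation readable after the names are gone.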

2. Code the transcripts

Coding, in this sense, is the process of tagging themes and content of interest that came up during interviews.

Basically, you are looking for words, phrases, and larger concepts that deal with the same idea. Label or tag them in a way that makes sense and helps you analyze all of the interviews through a standardized lens.

There’s no one right way to do this, so don’t overthink it. Typically, people are looking to code things like:

- Desired outcomes

- Pain points

- Decision triggers

- Objections

- Competitor mentions

- Emotional language

Try to be as consistent as possible with how you code. You went into interviews with specific questions to answer. Your coding framework should reflect those research objectives.

I would go through each transcript more than once. You will definitely catch things you missed on the second pass.

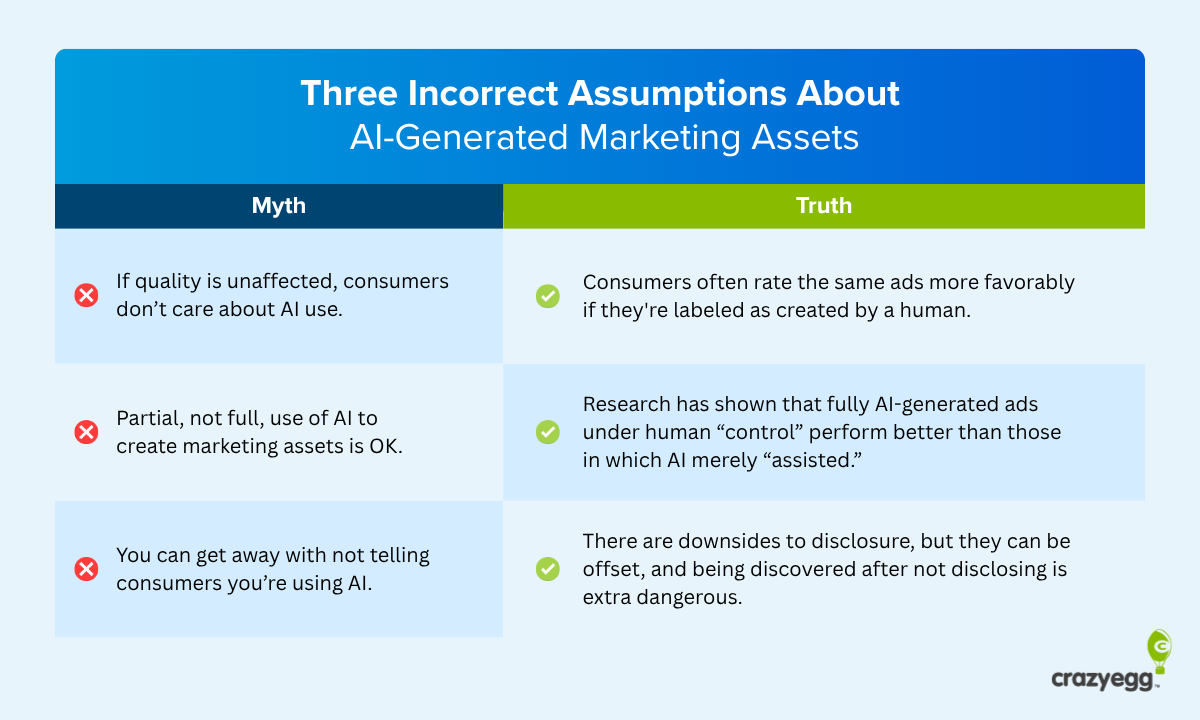

AI tools can automate parts of this process, but I would manually code each interview at least once. These tools are really helpful, but they’re liable to miss some nuance. With supervision, they can help you do a better job faster, but I would be wary of letting them run the entire coding process.
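
If you keep your codes in a spreadsheet or a script, one way to stay consistent is to define the codebook once and check every tag against it. The sketch below is only an illustration: the `CodedExcerpt` record, its field names, and the sample quotes are hypothetical, and the codebook simply mirrors the list of codes above.

```python
from dataclasses import dataclass

# Codebook based on the codes listed above; adjust it to your research objectives.
CODEBOOK = {
    "desired_outcome",
    "pain_point",
    "decision_trigger",
    "objection",
    "competitor_mention",
    "emotional_language",
}

@dataclass(frozen=True)
class CodedExcerpt:
    participant: str  # anonymized ID, e.g. "P1"
    code: str         # must be one of CODEBOOK
    theme: str        # short label for the specific idea
    quote: str        # verbatim text from the transcript

    def __post_init__(self):
        # Reject typos and one-off tags so every transcript uses the same codes.
        if self.code not in CODEBOOK:
            raise ValueError(f"Unknown code: {self.code!r}")

# Hypothetical excerpts for illustration.
excerpts = [
    CodedExcerpt("P1", "pain_point", "slow deployment", "It took us weeks to get live."),
    CodedExcerpt("P2", "desired_outcome", "fast setup", "I just wanted to be up and running."),
]
```

Rejecting unknown codes up front means a typo like "painpoint" gets caught while you are tagging, not when you are trying to compare interviews.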

3. Find meaningful patterns

With all the transcripts coded, it’s time to look for things that came up repeatedly and themes that emerged across multiple interviews.

You can do this by pulling all the sections for each code into a separate document and grouping them. For example, you could collect all the “pain points” in one group and all the “desired outcomes” in another.

From here, you are looking to find points of commonality between what different participants have said. For example, say 7/10 interviewees highlighted slow deployment as a pain point. That’d be something to pay attention to.

This is another part of the process that AI tools can help with. Upload all of the coded transcripts and ask for themes. I’ve used both ChatGPT and Claude for this type of work, and they do pretty good work. I would recommend using them as a starting point for analysis.
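
If your coded excerpts live in a spreadsheet or script, the grouping and counting can be sketched in a few lines of Python. The data, participant IDs, and function names here are made up for illustration. The one design choice worth noting is that it counts distinct participants per theme, so one person repeating the same complaint does not inflate the number.

```python
from collections import defaultdict

# Hypothetical coded excerpts: (participant ID, code, theme).
coded = [
    ("P1", "pain_point", "slow deployment"),
    ("P1", "pain_point", "slow deployment"),  # same person, mentioned twice
    ("P2", "pain_point", "slow deployment"),
    ("P2", "desired_outcome", "fast setup"),
    ("P3", "pain_point", "confusing pricing"),
    ("P3", "pain_point", "slow deployment"),
]
total_participants = 4  # assumes a fourth participant who raised none of these themes

def participants_per_theme(rows):
    """Map each (code, theme) pair to the set of participants who raised it."""
    groups = defaultdict(set)
    for participant, code, theme in rows:
        groups[(code, theme)].add(participant)
    return groups

def summarize(rows):
    """Return (code, theme, participant count) tuples, most widely shared first."""
    groups = participants_per_theme(rows)
    summary = [(code, theme, len(people)) for (code, theme), people in groups.items()]
    return sorted(summary, key=lambda item: (-item[2], item[0], item[1]))

summary = summarize(coded)
for code, theme, count in summary:
    print(f"{code}: {theme} ({count}/{total_participants} participants)")
```

A count like "3/4 participants" is also the kind of quantitative support you can carry straight into a report.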

4. Present your findings

Your goal is to offer data-backed answers to the research questions that kicked off this process. This usually entails a short report or presentation where you highlight what you learned, what it means, and where you should go from here.

Essentially, you need to distill dozens of hours of customer interviews into a short, readable, shareable document that people can understand at a glance.

I would focus on a few core claims that speak directly to your research questions. Support those claims with a mix of quantitative data and qualitative customer insights. For example:

- Claim: Customers found our pricing page confusing.

- Quantitative support: 6/10 customers said they had trouble understanding what features were included with the base plan.

- Qualitative support: One customer said, “I had to talk myself into trusting you guys. It felt like every feature had an asterisk.”

Don’t overload your readers with too much. A short summary, a bullet list of the key findings, a few spicy quotes, and maybe a chart or two. That’s great. Aim to make the implications of what you discovered as clear as possible, even if the next steps are not your responsibility.

A lot of the best quotes and meaningful patterns are not going to make it into a short presentation. That’s fine.

You can always put together additional documents and share them with other teams. Content might want a good bank of customer quotes that highlights desired outcomes, for example, or sales might want to have a set of real-life objections to use in their training materials.

Translate Customer Interviews Into Action

Ultimately, the value you get from running interviews comes from the real-world changes you implement in your conversion funnel, sales messaging, and potentially in your product.

Here are some common places where you can apply interview insights:

Top of funnel content: People told you what their biggest problems were and what they hoped to achieve. Copywriters can use these real sentiments to write headlines, ad copy, and social media microcontent that connect emotionally with potential buyers.

Middle of funnel content: You found out the real objections people have to using your product, and where its potential benefits confuse them. It’s worth making sure that landing pages and educational content speak clearly to those issues.

Bottom of funnel content: You probably learned how customers weighed your product vs. competitors, how they felt about the pricing, and points of friction they encountered in the buying process. Where can you incorporate this feedback to make sure you’re making the most compelling case for your positioning, pricing, and checkout?

ICP and buyer personas: Revisit and update assumptions about demographics, firmographics, and use cases, as well as the roles and job titles involved in the buying decision. Have you been actively targeting the right people, segments, and companies?

Sales copy: Update call scripts and cold email templates to better reflect the language that buyers use and how they are thinking about a potential purchase. Do you immediately address the important benefits and frame them in ways potential buyers understand?

Sales collateral: Check whether sales sheets and leave-behinds have foregrounded the most important buying considerations. Are the most urgent pain points front and center?

Product feedback: Did people mention workarounds they needed to use your product effectively? Did they mention features they wished you had or others that they hardly used? This type of feedback may contain useful signals to inform your product roadmap.

Validate Customer Interview Insights

What people say and what they do are not a perfect match.

So before you redesign everything based on what you learned during interviews, it’s worth checking whether or not the claims are borne out in your customer engagement metrics.

Heatmaps and session recordings can help you see if people are really engaging more with the types of content interviewees told you were important. Look back at this kind of behavioral engagement data to confirm that the interview insights hold up.

Once you make changes, compare heatmap data and session recordings for the updated pages against the earlier versions. Are you seeing increased engagement?

A/B testing is the most direct way to find out if redesigns based on interviews are beneficial.

Update a headline based on customer language. Test it against your existing headline. Does it actually perform better or not? Revise a pricing page to clear up the confusion people said they experienced. Does it increase conversion rates?
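
Most A/B testing tools will do the math for you, but if you want to sanity-check a result yourself, a two-proportion z-test is one common way to compare conversion rates. The sketch below is an illustration with made-up numbers, not a prescribed method, and the function name is my own. It assumes each variant got a reasonably large number of visitors and at least some conversions.

```python
import math

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the assumption that both variants convert equally well.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical numbers: existing headline (A) vs. customer-language headline (B).
z, p_value = two_proportion_z_test(conv_a=120, n_a=2400, conv_b=156, n_b=2400)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

A small p-value suggests the difference is unlikely to be noise, but it says nothing about whether the lift is big enough to matter for your business.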

Customer interviews give you a strong rationale for what to do and why. But at the end of the day, the data will tell you whether or not your changes are having the real-world impact you want.