What happens when you put powerful coding tools in the hands of people who don’t fully understand their outputs?

This scenario is now playing out. Vibe coding has made it possible to ship entire apps without even talking to a developer. Yet there seems to be little understanding of the dangers, especially among businesses without in-house dev expertise.

To better understand the risks, I interviewed three experts. I asked them about the underlying technology, what’s at stake, and, crucially, how smaller businesses can protect themselves.

What Is Vibe Coding?

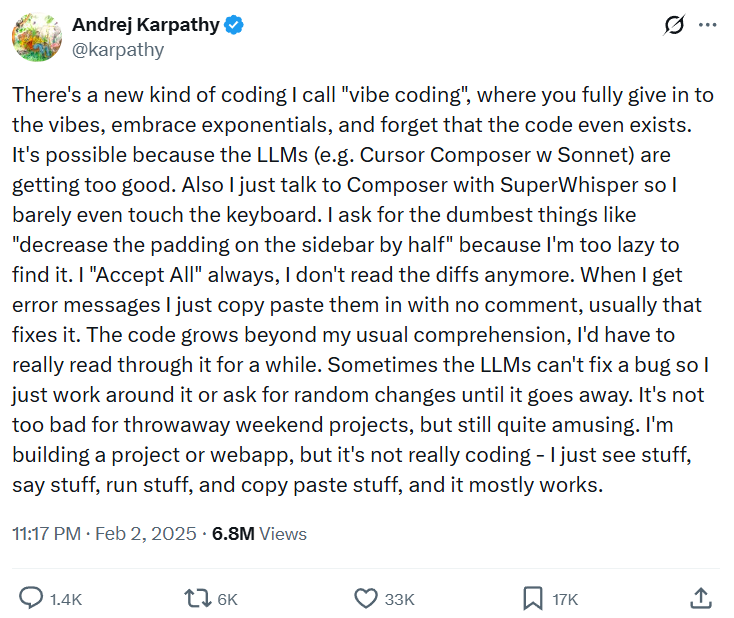

The phrase “vibe coding” comes from Andrej Karpathy, a highly regarded AI researcher and one of the co-founders of OpenAI (he’s since left the company). He first used it in an X post, where he described it as “not really coding.”

Vibe coding works in much the same way as other forms of generative AI. The user enters a prompt into their chatbot of choice. The LLM then draws on its vast corpus of training material to generate a new codebase or modify an existing one.

Popular platforms like Claude Code (Anthropic) and Codex (OpenAI) package their genAI engines in a suite of developer and agentic tools. This allows them to do things like access files in a local environment, execute multi-stage workflows, and interact with third-party platforms like GitHub.

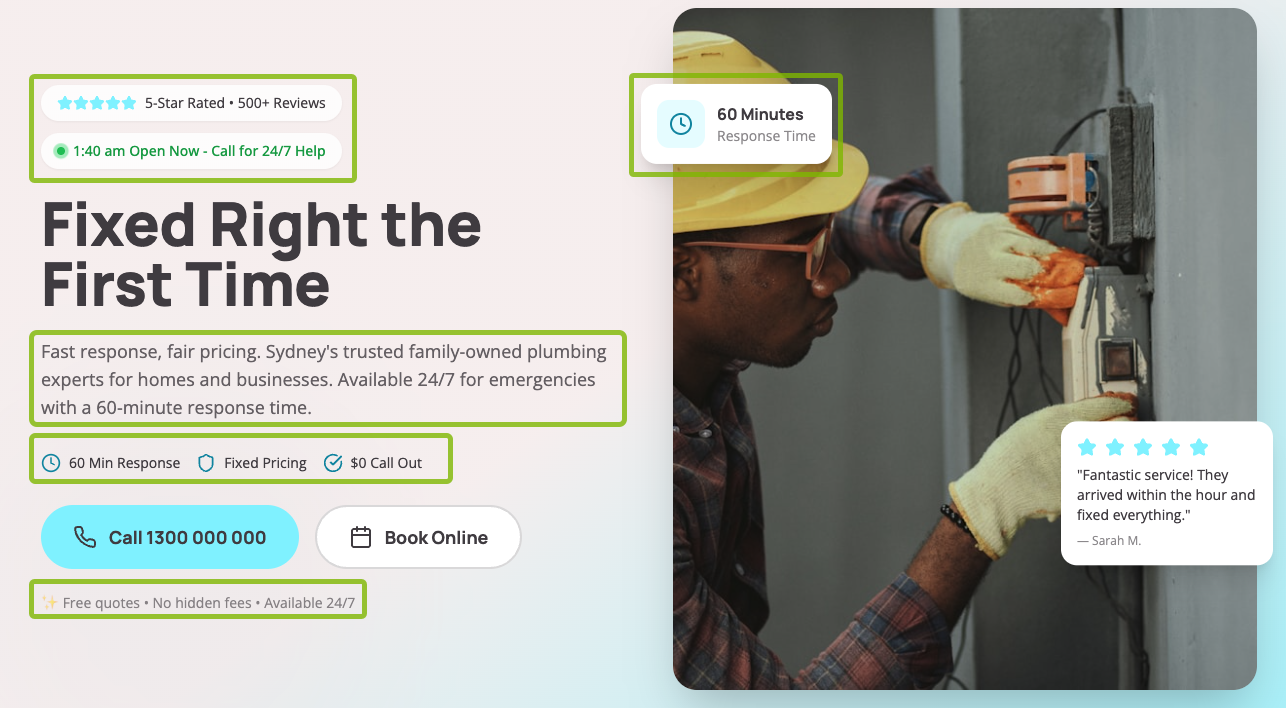

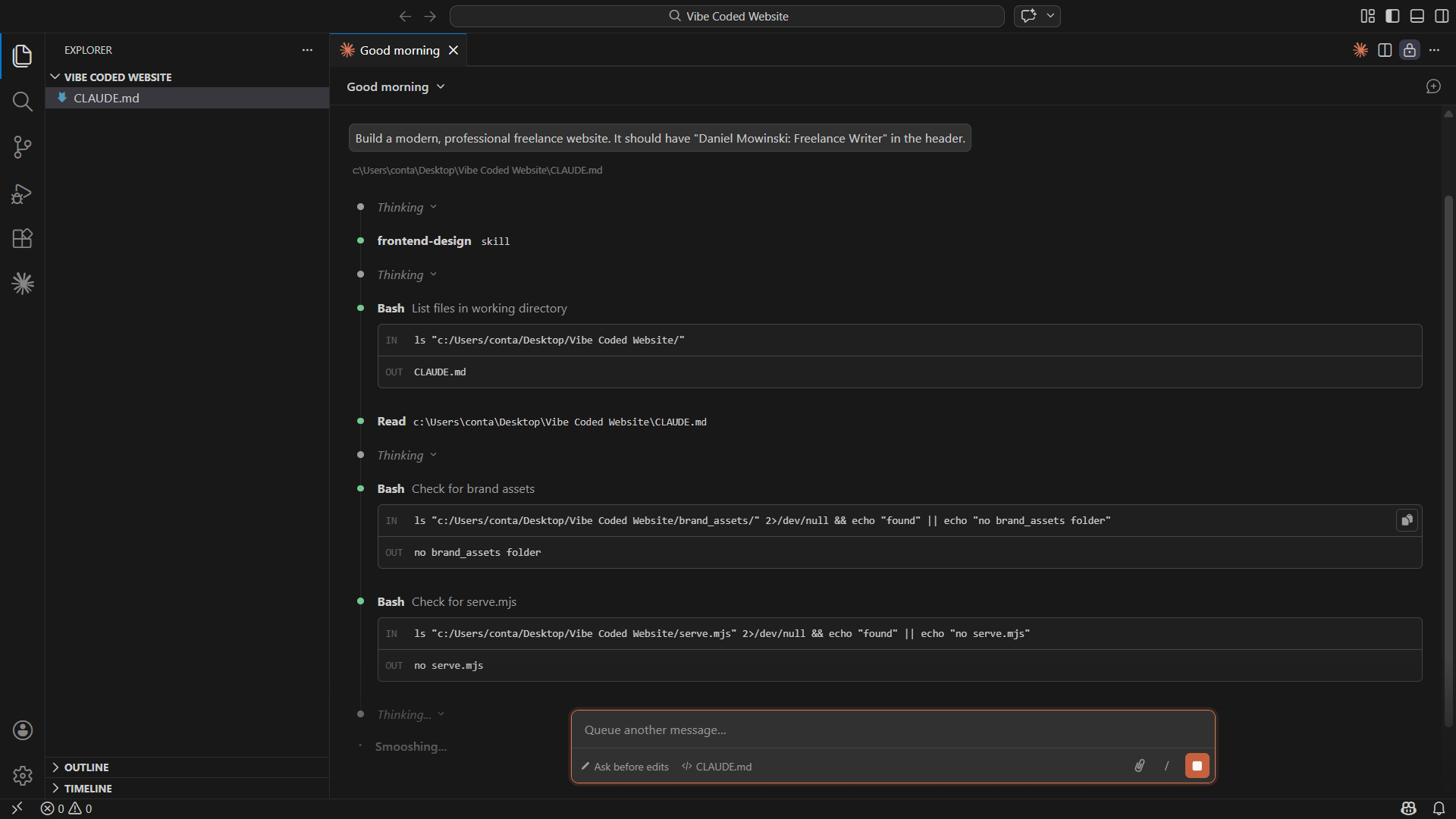

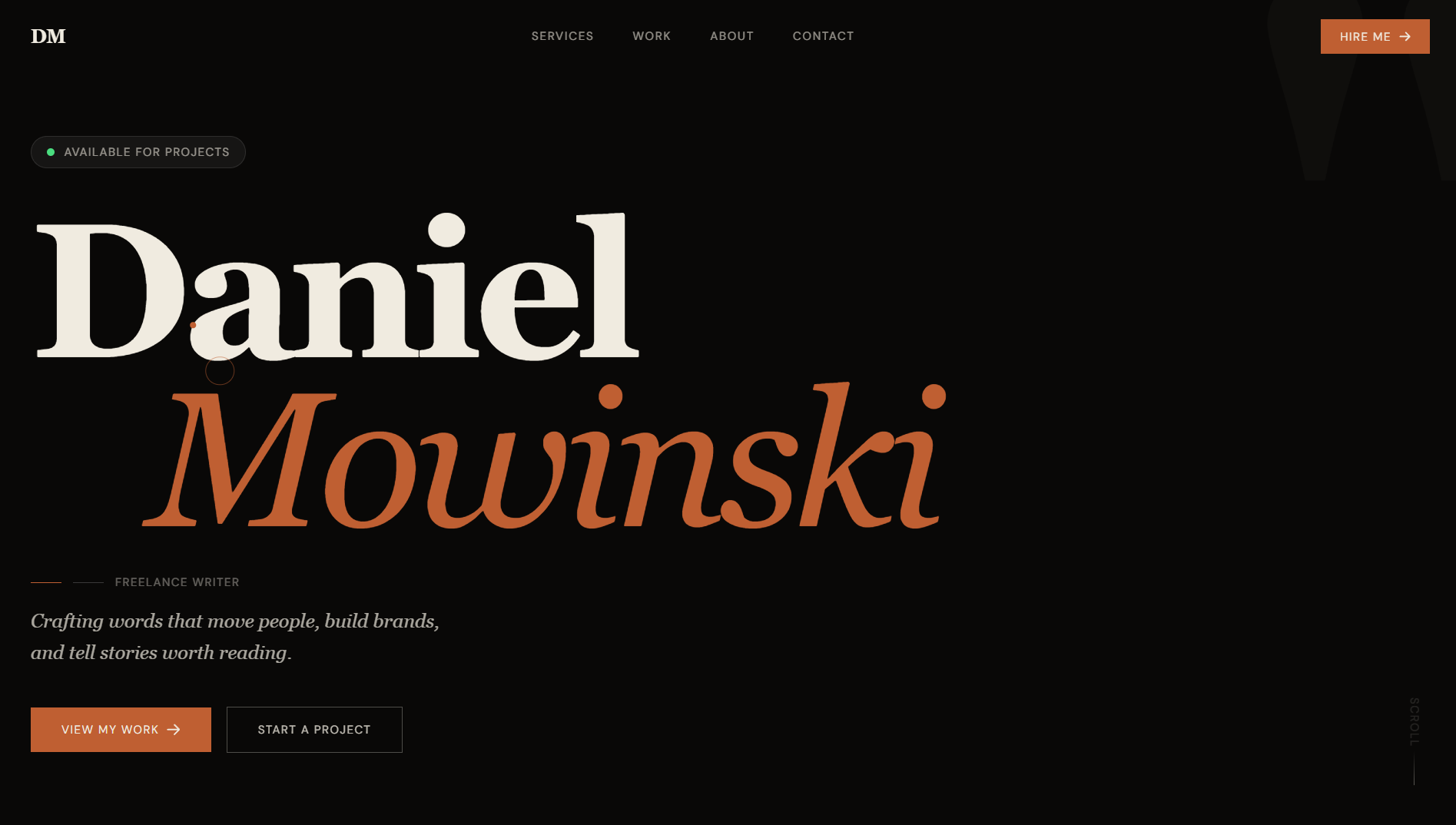

Here’s a quick overview of how easily I (a non-developer) can create a website in minutes. I simply open up Visual Studio Code, install the Claude Code extension, configure a CLAUDE.md file with my instructions, and ask for a website.

I can then check the output in my local environment, make changes as needed (Claude will modify the code directly), and upload the files to my chosen host.

There are more use cases than you can count. Personal websites, content management systems, data management systems, full-blown customer-facing apps—the list goes on.

How Widespread Is Vibe Coding?

Short answer? Very. A lot of people are doing it, and there’s no sign of the trend slowing.

Jellyfish reported in AI Engineering Trends that 67% of software engineers are using AI tools in some way. They also found that 14% of pull requests (proposed changes to existing codebases) were generated autonomously by AI agents.

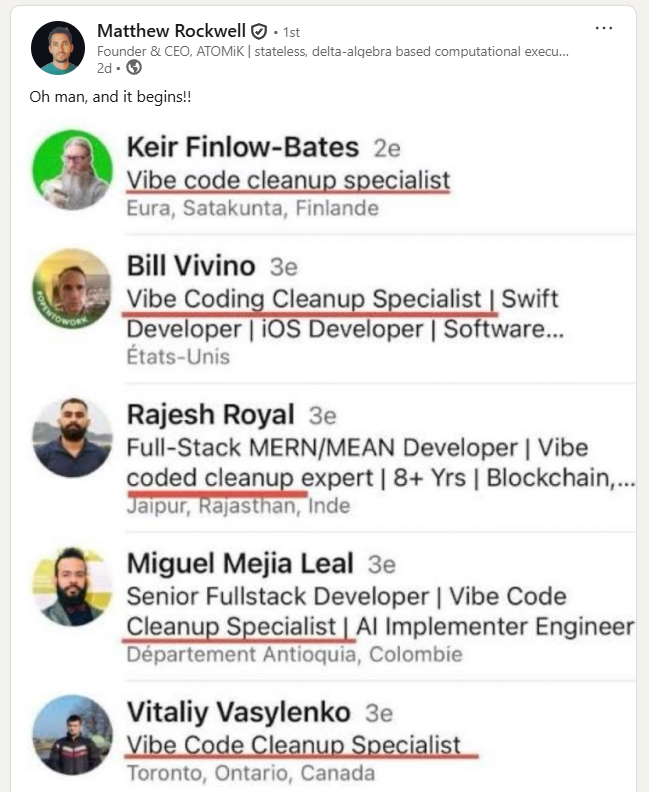

In early 2025, Silicon Valley startup accelerator Y Combinator said that a quarter of the startups in its winter cohort had codebases that were 95% AI-generated. We’re also seeing the emergence of a new role: the so-called vibe code cleanup specialist.

What is especially interesting, however, is uptake among non-technical businesses and those with little in-house dev expertise.

According to the 2025 Empowering Small Business report by the U.S. Chamber of Commerce, one in five small businesses use genAI coding tools. In addition, a survey by Pax8 found that 62% of SMB leaders believe AI adoption is essential for remaining competitive.

Small and medium businesses are using coding tools to ship features for in-house and external apps. Even more worryingly, this is happening in a context of hypercompetition and ongoing pressure to increase speed to deployment.

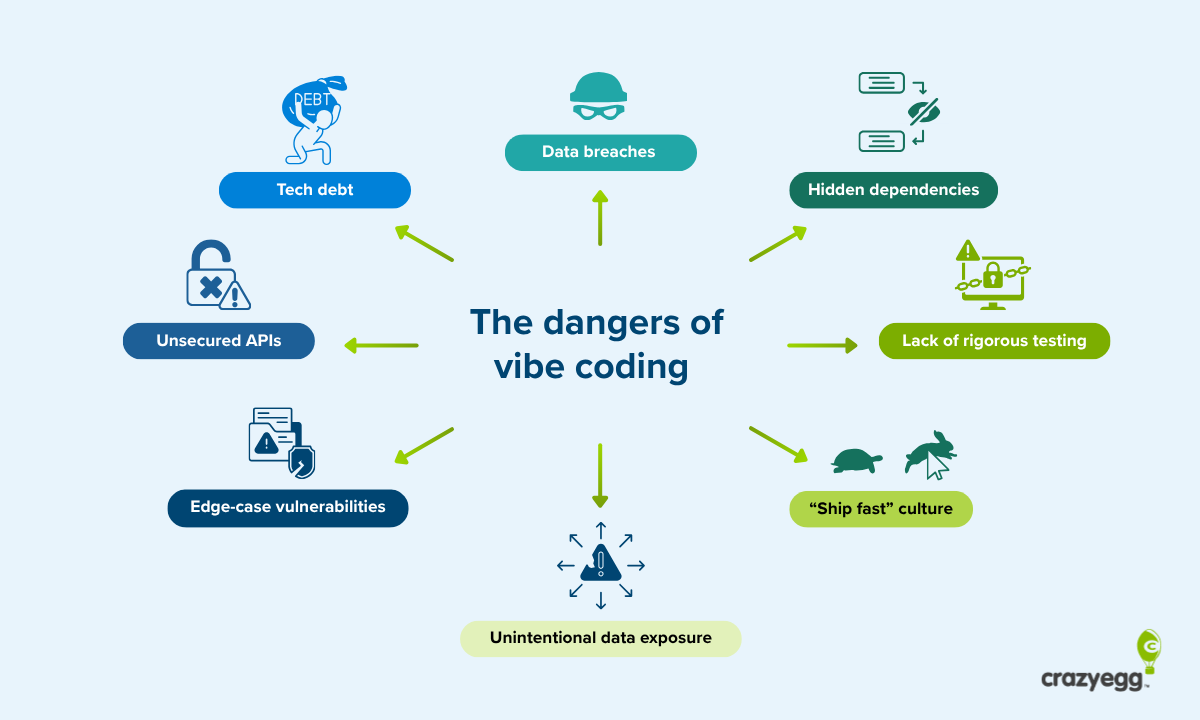

All of this makes it unsurprising that a smorgasbord of vulnerabilities has started to appear. In late 2025, Escape surveyed 5,600 publicly available apps built with vibe-coding platforms. They uncovered over 2,000 vulnerabilities. In certain cases, highly sensitive data like medical records were exposed.

I took these concerns to three experts who work with advanced software on a daily basis. I asked them how much of a risk these tools present and what SMBs in particular need to do to avoid potentially serious problems.

Amy Gottler, PhD: Communication Is Essential for Filling Knowledge Gaps

Amy Gottler is an e-learning consultant who works on complex education systems. She is the founder of eLearning Academy and holds a PhD in Technology Enhanced Learning.

When I spoke to Amy, she was keen to point out that she’s not anti-AI. “They’re absolutely amazing tools,” she said. “The issue I’ve observed, especially working with small teams, is that a lot of people don’t know what they don’t know.”

Data exploits are a real risk for small businesses

She explained that serious risks emerge when small businesses start collecting data, especially if they’ve gained confidence from using AI to help with tasks where there’s limited scope for things to go wrong.

“If you’re a small business and you want to create a one-page website, you could do that in less than ten minutes. In this case, the risk is quite low. You put it in a prompt, and you’ve got your website.”

Amy used the example of a hairdressing salon to illustrate the dangers that arise when somebody starts collecting and integrating data. “The next step for the business owner is to say, ‘I used that brilliant AI tool to create a website so quickly. I’m not going to pay for an application to take bookings or reservations. I’ll just try to create one myself.’”

The hairdresser now builds a database. They can capture users’ names, email addresses, and potentially credit card information when they’re booking an appointment. “If you don’t know how programming works, you could, for example, be creating a database where passwords are stored in plain text without encryption, which means that hackers have a nice open door to all your customers’ data.”
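To make the plain-text problem concrete, here is a minimal sketch of what storing a password safely can look like. It uses only Python’s standard library (`hashlib.scrypt`, which needs a Python build linked against OpenSSL 1.1 or later). The function names and cost parameters are illustrative assumptions, not a tuned recommendation, and passwords are normally hashed with a salt rather than encrypted. In practice, a managed authentication provider would usually handle this step.

```python
import hashlib
import hmac
import secrets

# Illustrative scrypt cost parameters (assumptions, not tuned recommendations).
SCRYPT_N = 2**14
SCRYPT_R = 8
SCRYPT_P = 1


def hash_password(password: str) -> tuple[bytes, bytes]:
    """Return (salt, digest). Only these two values are stored, never the password."""
    salt = secrets.token_bytes(16)  # fresh random salt for every user
    digest = hashlib.scrypt(
        password.encode("utf-8"), salt=salt, n=SCRYPT_N, r=SCRYPT_R, p=SCRYPT_P
    )
    return salt, digest


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    """Re-derive the digest from the login attempt and compare in constant time."""
    candidate = hashlib.scrypt(
        password.encode("utf-8"), salt=salt, n=SCRYPT_N, r=SCRYPT_R, p=SCRYPT_P
    )
    return hmac.compare_digest(candidate, expected)
```

If the database leaks, an attacker gets salts and digests rather than readable passwords, which is the difference between an incident and Amy’s “nice open door.”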

Why vibe coding creates unnecessary tech debt

I also asked Amy about her direct experience working on learning management systems (LMS) through her consultancy business. It’s an interesting case because the in-house professionals responsible for managing these systems often have some programming experience but lack highly specialized expertise.

“I’m seeing that people will often have some familiarity with HTML, CSS, and JavaScript. They use AI tools to take their outputs to what they see as the next level, but they don’t know how to run full tests. So they launch a new feature, and it causes issues. Over time, other things on the system stop working. This isn’t as much of a risk as a direct data exploit, but it’s an annoyance because it means you have to troubleshoot what’s going on and find the rogue code.”

The problem here is tech debt. A feature appears to work when it’s first implemented. But whoever is responsible for implementing it doesn’t understand the broader context of the system. Because the new features aren’t fully supported, a simple update is enough to break existing dependencies or introduce a string of small security vulnerabilities. This creates a time and cost drain at a later stage when the problem needs to be fixed.

“In larger organizations, you have what I call semi-developers. These people are more involved in building frontend functionality, and they’re the ones who are more likely to be a little bit more adventurous with vibe-coding tools. In attempting to make the system go further, they can unknowingly introduce security vulnerabilities, cross-stack compatibility issues, and hidden dependencies.”

Businesses need to be cautious about cutting budgets

We finished by talking about another worrying trend that Amy has seen in her work: companies reducing tech budgets and replacing developers with AI. She argues that this impulse on the part of budget-strapped businesses needs to be treated with a great deal of caution.

“I see some companies starting to say, ‘We’re going to replace people with AI.’ And with tight budgets, I can understand that line of thinking. But it’s where mistakes start to happen. If companies are adopting these tools, there needs to be proper governance behind it. Not only do you need to vet the tools that are being used to make sure that they’re appropriate, they need to be set up properly,” she explained. “So if you’re giving your employees licenses to use them, it’s important to ask if those licenses have been set up so there’s no data sharing.”

She finished by making the case for employee education, an area that is often deprioritized. “I think there’s also a user education element,” she said. “You shouldn’t blindly use these tools. I think this is everything in IT. Security training should be provided and enforced.”

David Mytton: Don’t Confuse Vibe Coding With Serious Agentic Engineering

David Mytton is the CEO of Arcjet, a security platform that provides real-time guardrails for AI apps. He is also conducting PhD research on sustainable computing at the University of Oxford. Console, his weekly digest for experienced developers, goes out to more than 30K subscribers.

David began by describing how he thinks AI coding tools have altered the traditional development workflow. In his view, this change has led to greater speed and lower costs but has also created genuine security risks.

How AI coding is consuming the middle “implementation phase”

“There used to be three phases of development,” he told me. “There was upfront planning, which happens before you write any code. There’s the middle implementation phase where you write the code. Finally, there’s the end phase for testing and making sure everything works before deployment. These three phases still exist, but the middle phase is no longer run by humans, or at least it won’t be in a very short period of time.”

He explained how, in this new model, developers add value by reviewing the initial roadmap, coming up with ideas, refining the application with the coding agent, and then, at the end, verifying everything actually works. “The middle bit, the code bit, is where all the value was,” David said. “Now, instead of the human writing the code, AI is taking over. This delivers speed. It also makes code cheaper. You can generate lots of different ideas and throw things away that you don’t like or don’t work.”

What’s not to like? Lower costs, less time to deployment, and plenty of space to try new prototypes. It all sounds perfect, at least until you acknowledge the lingering issue that you can’t fully trust AI. “While AI is very good at coming up with coverage of all the different use cases and edge cases that you might have,” he said, “it’s going to make decisions that can open up security holes, either because things have been implemented in an insecure way or because known security issues aren’t addressed.”

This has added a new element, the testing of AI edge cases, to the final phase described above. And it’s where an experienced developer is non-negotiable. “The problem is you don’t know about edge cases unless you’ve experienced them in the past or you have knowledge of this field. AI is going to introduce potential security vulnerabilities without anyone understanding.”

The difference between vibe coding and agentic engineering

David was careful to draw a distinction between vibe coding and true agentic engineering: “Agentic engineering involves running the three phases of planning, implementation, and deployment after proper validation. Vibe coding is different in that you’re just prompting the AI to do things and, often, deploying to production without rigorous testing or any testing at all.”

“I think there’s more vibe coding than agentic engineering right now,” he went on to say, “and I think that’s because of the kind of developers who are adopting these tools first. The more experienced engineers are skeptical. They’re adding AI to existing workflows, which maintains the three traditional phases. The less experienced engineers are going as fast as they possibly can, releasing things without thinking about it. That can be an advantage in certain circumstances, but it has a lot of inherent risk. The fact that Anthropic and OpenAI have both recently released built-in security tools for their coding agents proves that this is a real problem.”

One of the recurring themes of our conversation was the speed at which new code is being deployed. David has seen first-hand how velocity has increased. And it’s something he suspects has spread to the enterprise sector, in significant part because of competitive tensions. Interestingly, he said that he has heard of developers being given a remit to work on projects using AI outside of organization guardrails. These so-called “tiger teams” have mandates to ship features as quickly as possible.

AI tools can’t build secure apps on their own

Why can’t AI tools simply run their own security checks? I posed this question to David. He explained that there are three interlocking factors at play:

- The inadequacies of LLM training materials

- The subtleties of security issues

- The absence of advanced infrastructure needed to secure apps on an ongoing basis

“If you think about all the code that exists, we already have security vulnerabilities,” he explained. “Humans haven’t created perfect code. AI is trained on that imperfect body of work, which means it’s going to reimplement existing patterns of insecure code.”

The issue is further compounded by the fact that many of these security flaws aren’t obvious. “There are lots of subtleties in how these protocols work. Basic issues are quite rare because it’s more the case that there are small flaws in the way something is implemented. This can result in a chain of multiple vulnerabilities that can be exploited to access a system. It’s very rare that there’s going to be one single issue like failing to implement authentication correctly. And you need to draw on different, nuanced approaches to identify subtle bugs. These are what AI struggles with.”

David argues that the solution is a mix of expert human review and strict, automated guardrails, both of which are lacking in the industry. “It’s just no longer possible for humans to review every single line of code. Certain code will need review by humans in the most sensitive areas, things like payment flows, authentication, and other really critical parts of the application. But we also need to provide safe rails for people to implement common functionality.”

This is the underlying philosophy of Arcjet. His company provides a series of building blocks that allow developers to bring common security functionality into their application without having to re-implement it from scratch. “A simple example,” David says, “is that we have bot detection that identifies automated scrapers and prevents issues like spam sign-ups and automated abuse of your application. We maintain multiple threat feeds with real-time data coming from multiple providers and visibility across thousands of applications deployed on the product. We have all sorts of heuristics that go into the detection of not just known threats but emerging threats that happen over very short periods of time.”

This approach highlights the very real shortcomings of asking the tools themselves to take care of security. “We have to do an array of things behind the scenes to maintain a product. If you ask ChatGPT to stop bot signups, it will implement some very basic protections. But it’s not going to have the sophistication that we’re able to bring to the problem. And that’s just one area. There are many others like:

- Secure logins

- Dealing with cookies correctly

- Managing personal information across jurisdictions to deal with the different privacy legislation

“Vibe-coders using AI to account for these risks will see a lot of incorrect and insecure implementations.”
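As one small illustration of the “cookies” item on that list, the sketch below builds a session cookie header with the attributes that are easy to forget. It uses Python’s standard `http.cookies` module. The function name, the cookie name, and the chosen values are assumptions made for the example, and a real application would normally let its web framework set these.

```python
from http.cookies import SimpleCookie


def build_session_cookie(token: str, max_age_seconds: int = 3600) -> str:
    """Return a Set-Cookie header value for a session token (hypothetical helper)."""
    cookie = SimpleCookie()
    cookie["session"] = token
    morsel = cookie["session"]
    morsel["secure"] = True      # only sent over HTTPS
    morsel["httponly"] = True    # not readable from page JavaScript
    morsel["samesite"] = "Lax"   # limits cross-site sending
    morsel["path"] = "/"
    morsel["max-age"] = max_age_seconds  # expires instead of living forever
    return morsel.OutputString()
```

A generated implementation that omits any one of these attributes will still appear to work, which is why this kind of flaw tends to go unnoticed.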

Matthew Rockwell: Vibe Coders Can’t Find Subtle Vulnerabilities

Matthew Rockwell is the founder of ATOMiK, where he is developing hardware architecture that minimizes data movement by updating only state changes (deltas). He previously worked as an advanced manufacturing engineer at Keysight Technologies, where he specialized in database management for industrial IoT applications.

As somebody with wide experience of mission-critical systems, one of Matthew’s main concerns is that non-experts can’t reliably stress-test the data security of their vibe-coded apps.

Vibe coding will be big, but it needs guardrails

Like everybody I spoke to, Matthew was eager to point out that he’s not against vibe coding in its entirety. In fact, he thinks it opens up exciting opportunities for people with limited experience who are working on app and website frontends.

“When it comes to vibe coding and programming in general,” he told me, “there’s always been two avenues: the frontend, the UI and UX, and the backend that makes everything work. I think people who are more creative and artsy tend to enjoy the former part more. That’s why vibe coding has really taken off and why it’s ultimately a good thing. Somebody who doesn’t have a deep programming background now has the tools to be artistic.”

So far, so good. The issue is that this freedom creates a set of problems that traditional dev workflows aren’t designed to fix. “It’s possible to create UIs that traditional programmers never would have even thought of, and that’s creating a new set of backend challenges, particularly around data. We saw with OpenClaw exactly what could happen when you have exposure to private data.”

The OpenClaw saga he’s referring to is one of the most significant AI security crises of 2026. OpenClaw lets users build autonomous AI agents that can exercise significant control over local systems. A number of serious issues have emerged, including the distribution of malicious skills via the OpenClaw marketplace, a release bug that allowed hackers to hijack browser connections and control local instances, and a large database exposure via Moltbook, a social media network for OpenClaw agents.

One of the basic issues is unsecured two-way gateways

One of the main problems with vibe-coded apps revolves around poorly secured two-way gateways. These are bidirectional interfaces that allow for the exchange of data. APIs, which give users access to backend services, are a well-known example.

“I think the biggest issue,” Matthew told me, “is that vibe coders don’t understand how to create virtual environments that secure data locally and prevent it from extending beyond an encrypted router. A lot of gateways are two-way gateways. And because a vibe coder wants a new feature, they allow this gateway to exist without thinking about what someone else can do with access to it. If you don’t have a strong virtual sandbox environment, you won’t be able to test applications offline before pushing them into production. You can’t see and understand what somebody who’s looking to perform malicious acts is capable of.”

He uses the example of a radio channel to illustrate the common misconception that data only moves in one direction: “It’s like I’ve created a radio, but I’m under the impression that the radio is a one-way radio. I can talk, but nobody can listen. But that’s not the reality. The reality is you are creating an exposed environment. And the danger comes from storing private information, even locally, in a way where it isn’t secured and is therefore completely visible. It’s basically presenting customer data to hackers on a silver platter.”

Matthew isn’t pessimistic, however. He thinks this problem will lead to the emergence of a new role: the infrastructure engineer. “There’s going to be a serious need for professionals who can set up robust, safe testing infrastructures. They’ll also come up with creative solutions to hurdles like insufficient context windows and reliable multi-agent orchestration. The push I’m seeing, and I think this is integral to being able to have your employees build features that ship quickly without security vulnerabilities, is to fully re-orchestrate the way data is stored and have the underlying database infrastructure where it really needs to be.”

Only experienced developers can try to hack systems effectively

In his work with complex data storage systems, Matthew spent a lot of his time testing edge cases. These are unusual situations that sit on the “edges” of expected user behavior and inputs. They can be a source of subtle, high-impact vulnerabilities and are often the focus of malicious actors.

“When you’ve dealt with user data and you understand the privacy component thoroughly, you know that it’s not as trivial as vibe coding,” he said. “It’s not as simple as building something and putting it out there. When you’re dealing with user data, you’re testing edge cases and essentially trying to hack your own system. That’s something only an experienced programmer knows how to do. A vibe coder is unlikely to even be concerned about it.”
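A rough sketch of what “trying to hack your own system” can look like in miniature: a booking lookup is fed deliberately hostile inputs to see how it behaves. The table, the function names, and the list of inputs are all hypothetical, and the example uses an in-memory SQLite database from Python’s standard library. Real edge-case testing goes far beyond this.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Create a throwaway in-memory database with one booking."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE bookings (email TEXT NOT NULL, slot TEXT NOT NULL)")
    conn.execute("INSERT INTO bookings VALUES (?, ?)", ("ana@example.com", "Mon 09:00"))
    conn.commit()
    return conn


def find_bookings(conn: sqlite3.Connection, email) -> list:
    """Look up bookings by email, rejecting obviously malformed input first."""
    if not isinstance(email, str) or not (3 <= len(email) <= 254) or "@" not in email:
        raise ValueError("invalid email")
    # Parameterized query: the input is bound as data, never spliced into the SQL text.
    query = "SELECT email, slot FROM bookings WHERE email = ?"
    return conn.execute(query, (email,)).fetchall()


# Inputs a malicious or careless user might send (illustrative, far from exhaustive).
HOSTILE_INPUTS = [
    "",                                         # empty value
    "' OR '1'='1",                              # classic injection probe
    "x@example.com'; DROP TABLE bookings; --",  # injection disguised as an email
    "a" * 10_000 + "@example.com",              # oversized input
    None,                                       # wrong type entirely
]


def probe(conn: sqlite3.Connection) -> list:
    """Run every hostile input and record whether it was rejected or what it returned."""
    outcomes = []
    for value in HOSTILE_INPUTS:
        try:
            outcomes.append(find_bookings(conn, value))
        except ValueError:
            outcomes.append("rejected")
    return outcomes
```

The point is less the specific checks than the habit: each input is something an attacker might try, and the test confirms that the system either rejects it or returns nothing, and that the data is still intact afterwards.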

The obvious danger is small and medium businesses that don’t have any understanding of the high-level, complex skills that hackers regularly employ. But larger businesses might also be at risk.

“Especially when you’re in industry,” Matthew explained, “you’re holding on to confidential information that can lead to a catastrophic situation if it’s revealed. You’re talking job losses, long-term brand damage, and even legal action. A lot of industries haven’t even adopted AI yet because they don’t feel that the security is there. When you’re dealing with that level of sensitivity, you develop a lot of respect.”

This is at odds with the “ship at any cost” mentality that dominates so much of the current approach among companies. “With vibe coding, everything seems to be on more of an output basis. People are thinking, ‘What can I get out there? What can I build today? I want to build something fast and I want to get it out.’ But when you start dealing with secure and private information, you need to be beyond cautious.”

How to Vibe Code Safely: 4 Tips for Non-Experts

Several threads recurred in my discussions about vibe coding: data exposure is the core risk, testing requires in-depth expertise, and major security breaches are likely in the coming months.

At the same time, however, there was a lot of optimism. Despite the risks, these are democratizing tools. When used safely, they open up a realm of opportunities for non-developers and small and medium businesses.

1. Define your blast radius

When I asked him how businesses can protect themselves from security breaches, David Mytton explained the concept of the “blast radius.”

“What we’ve been doing at Arcjet is to categorize our code base based on the blast radius and the risk levels of the different areas.”

A blast radius is a prediction of how much damage a potential breach can cause. A homepage bug, for example, is an annoyance but not a mission-critical problem. If there’s a security bug in a login flow, on the other hand, then that’s a serious issue. You should apply separate rules to the different areas of your codebase. This way, developers can vary the rigor of their reviews accordingly.

“You can get pretty granular in large code bases to fully understand the risk levels and to know whether it’s fine to have code go out automatically with basic checks versus having humans come into the loop,” David explained. He described it as “the first step to safely enabling the velocity that AI is bringing.”
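One way to picture this is a small lookup that maps parts of a codebase to risk tiers and picks a review policy based on the riskiest file in a change. This is a sketch under stated assumptions, not a description of how Arcjet does it: the path patterns, tier names, and policies below are all hypothetical.

```python
from fnmatch import fnmatchcase

# Hypothetical path patterns and tiers; a real project would define its own.
RISK_RULES = [
    ("src/auth/*", "high"),      # login flows, sessions
    ("src/payments/*", "high"),  # payment flows
    ("src/api/*", "medium"),
    ("src/pages/*", "low"),      # homepage and other static pages
]
TIER_RANK = {"low": 0, "medium": 1, "high": 2}
DEFAULT_TIER = "medium"  # code that matches no rule is not assumed to be safe

# Hypothetical review policy for each tier.
REVIEW_POLICY = {
    "low": "ship after automated checks",
    "medium": "automated checks plus one human reviewer",
    "high": "automated checks plus security-focused human review",
}


def tier_for(path: str) -> str:
    """Return the risk tier of the first rule that matches the path."""
    for pattern, tier in RISK_RULES:
        if fnmatchcase(path, pattern):
            return tier
    return DEFAULT_TIER


def review_policy(changed_paths: list[str]) -> str:
    """A change is treated as being as risky as the riskiest file it touches."""
    tiers = [tier_for(path) for path in changed_paths]
    highest = max(tiers, key=TIER_RANK.__getitem__, default="low")
    return REVIEW_POLICY[highest]
```

With these rules, a change that only touches `src/pages/home.tsx` can ship after automated checks, while the same change bundled with an edit to `src/payments/charge.py` is routed to a human.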

2. Ensure tight alignment between frontend and backend teams

If you have a dedicated backend team, you can prevent a whole host of problems by ensuring that anybody working on the frontend can communicate quickly with IT and dev professionals. Small frontend changes tend to be less risky, but they can be responsible for costly tech debt. Simple checks can go a long way in preventing this.

Amy Gottler emphasized this point: “People at the front think, ‘Oh yeah, I use this system, but I don’t like this bit. I want to make it better. How can I now change it?’ In these cases, there needs to be good communication between the back-end IT team and the people who are actually in charge of managing and maintaining the day-to-day platform.”

3. Use third-party database tools

If you’re set on adding advanced functionality to vibe-coded apps, use third-party tools with established security credentials. Databases are the obvious example here, but the same applies to authentication providers, payment processors, file storage services, messaging APIs, and anything else that handles sensitive data or system access.

Matthew Rockwell regularly gives this advice. “When I’m talking to vibe coders, I do recommend using third-party established back-end databases. They’ve invested billions of dollars into encryption methods. You can pull APIs to create data-passing structures with their security enabled through your own user interface. Don’t stop vibe coding; enjoy it. Just don’t try to secure data on your own terms.”

4. Don’t allow AI to implement core security features

Don’t allow AI to implement core security features from scratch. These are the parts of an application that determine who gets access to what and how sensitive data is protected. If they fail, the consequences are usually severe.

Developers should rely on established libraries and managed services for authentication, payment processing, API access control, encryption, session handling, and secrets management. These tools have been tested at scale and reviewed by specialists, and they are updated when new threats emerge.

David Mytton gave a particularly relevant example: “There is a common rule that developers should never write their own cryptography. There are very few people in the world who can write secure cryptography. And that’s why everyone always uses one of the few standard libraries that are available. I think that’s going to become the case for other areas of the codebase as AI use continues to grow.”
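In code, following that rule mostly means calling vetted primitives instead of inventing them. The sketch below uses Python’s standard `secrets`, `hmac`, and `hashlib` modules to generate a session ID and to sign and verify a message. The function names are hypothetical, and the example illustrates the principle rather than a complete session or token scheme.

```python
import hashlib
import hmac
import secrets


def new_session_id() -> str:
    """Use the OS-backed secure generator, not a homemade or time-based scheme."""
    return secrets.token_urlsafe(32)


def sign(message: bytes, key: bytes) -> str:
    """Sign a message with HMAC-SHA256, a standard and widely reviewed construction."""
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def is_valid(message: bytes, key: bytes, signature: str) -> bool:
    """Check a signature using a constant-time comparison."""
    return hmac.compare_digest(sign(message, key), signature)
```

Nothing here is clever, and that is the point: the sensitive work is delegated to library code that specialists maintain.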