Full disclosure: I’m not a developer. I don’t code.

And yet, as a digital marketer, I find myself looking at the source code of websites all the time.

When I’m in marketing analysis mode, one of the first things I do when I land on a client’s site is press Option + Command + U on my MacBook’s keyboard. Instantly, I’m face-to-face with the page’s source code.

No matter how many SEO tools you have at your disposal, at some point you’ll wind up looking into the source code of a website, either to check on a specific item or to conduct a larger SEO audit.

It’s a smart practice for any marketer.

If you know what to look for, you can find opportunities for improvement as well as any SEO errors that could create problems with organic visibility down the road.

Do you need to be a web developer to be able to read the code? No. A basic understanding of code and SEO elements is enough to make sure things are working as they should.

The source code is important because it’s what makes your website display and function the way it does. It works the same way with other kinds of software, like video games.

The code behind the scenes is necessary to drive the functionality, mechanics and animation of those games. If you want to make sure everything is doing what it’s supposed to, you go to the source.

Viewing the Source Code

Pulling up the source code is relatively simple, and there are a number of ways to access it depending on your platform, browser and operating system. Here are a few ways:

- If you use a CMS like WordPress, you can access all your template files within the admin dashboard. The same applies to many ecommerce platforms like BigCommerce and Shopify

- Right-click inside the tab you’re working in and select “view source”

- Press CTRL+U to open the source in a new tab (Windows users)

- Press Option+Command+U to open the source (Mac users)

Keep in mind that while you may be able to view the source in full, some platforms limit what you can change. If you use a hosted platform like Shopify, BigCommerce, Wix or a similar hosted CMS, you will only be able to edit part of the source code.

Here are key reasons why I dive into the source code of websites:

1. Slow Load Times and Excessive Scripts

Nowadays site owners use a variety of scripts to add functionality to their websites. In many cases these are JavaScript. It’s fairly common to place those scripts in the head of the page so they load early on as the page loads up.

Unfortunately, front-loading the scripts can add a tremendous amount of latency before the rest of the page loads, and that’s a problem – especially when you have a lot of scripts.

For every additional second of load time, you could see as much as a 7% decrease in conversions as people bail from your site.

Ouch!

Checking the scripts on your site is important because you might find scripts that aren’t even utilized anymore still sitting in your source. This can cause additional errors and will slow down that load time unnecessarily.

Unless a script absolutely needs to load before the rest of the content, I recommend moving it to the end of the page so it loads last. You can also move script code into its own file so there’s not an excessive amount of it embedded on each page.
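If you (or a developer on your team) want to automate this check, here is a minimal Python sketch that lists external scripts sitting in the head without an async or defer attribute. Those are the ones most likely to hold up the rest of the page. It uses only Python’s standard library; the class name, function name and sample markup are made up for illustration, and it only sees scripts present in the raw source, not ones injected later by other scripts.

```python
from html.parser import HTMLParser


class ScriptAudit(HTMLParser):
    """Collects <script> tags and notes whether each one sits inside <head>."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.scripts = []  # one dict per <script> tag found

    def handle_starttag(self, tag, attrs):
        if tag == "head":
            self.in_head = True
        elif tag == "script":
            attrs = dict(attrs)
            self.scripts.append({
                "src": attrs.get("src"),  # None means an inline script
                "in_head": self.in_head,
                # async/defer let the browser keep parsing while the file downloads
                "deferred": "async" in attrs or "defer" in attrs,
            })

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def blocking_head_scripts(html):
    """Return the src of external <head> scripts that lack async and defer."""
    audit = ScriptAudit()
    audit.feed(html)
    return [s["src"] for s in audit.scripts
            if s["in_head"] and s["src"] and not s["deferred"]]


# Hypothetical page source, for illustration only.
sample = """<html><head>
<script src="/js/slider.js"></script>
<script src="/js/analytics.js" async></script>
</head><body><script src="/js/chat.js"></script></body></html>"""

print(blocking_head_scripts(sample))
```

Anything this returns is a candidate for moving to the end of the page, or for removal if nobody can say what it does.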

2. Meta Content

The meta content for your website pages contains some critical elements that you should audit to make sure they’re coded properly.

While meta content like the title and description doesn’t directly contribute to search rank like it used to, it still plays a part in the organic traffic that comes through your site.

The meta content, which is displayed in the search results when a user performs a query, is the first conversion point for a prospective customer or user. Keywords they use, which appear in the meta content, are highlighted to help establish relevancy and assist the user in choosing the right results.

Aside from the content of the title and description on each of your pages, you also want to review other items like the use of canonical URL tags. Check your meta elements to make sure they’re properly structured, optimized, and not overly duplicated.

It’s easy to confuse the search crawlers if your site includes duplicated meta content, or elements that aren’t correctly formatted.
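As a rough illustration of what to look for, the sketch below pulls the title, meta description and canonical link out of a page’s source and reports any that are missing, empty or duplicated. It is a simplified check built on Python’s standard library, not a full audit; the names and the sample markup are assumptions for the example.

```python
from html.parser import HTMLParser


class MetaAudit(HTMLParser):
    """Collects every title, meta description and canonical link in the source."""

    def __init__(self):
        super().__init__()
        self._in_title = False
        self.titles = []
        self.descriptions = []
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
            self.titles.append("")
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.descriptions.append(attrs.get("content") or "")
        elif tag == "link" and "canonical" in (attrs.get("rel") or "").lower().split():
            self.canonicals.append(attrs.get("href") or "")

    def handle_data(self, data):
        if self._in_title:
            self.titles[-1] += data

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False


def meta_problems(html):
    """Return a list of plain-language problems found in the meta content."""
    audit = MetaAudit()
    audit.feed(html)
    problems = []
    for label, found in (("title", audit.titles),
                         ("meta description", audit.descriptions),
                         ("canonical link", audit.canonicals)):
        if not found:
            problems.append(f"missing {label}")
        elif len(found) > 1:
            problems.append(f"duplicate {label} ({len(found)} found)")
        elif not found[0].strip():
            problems.append(f"empty {label}")
    return problems


# Hypothetical page source, for illustration only.
sample = """<head><title>Blue Widgets | Example Store</title>
<meta name="description" content="Shop blue widgets.">
<meta name="description" content="Old description left by a plugin.">
</head>"""

print(meta_problems(sample))
```

Duplicate descriptions like the one in the sample often come from two plugins or templates both writing to the head, which is easy to miss from the front end.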

3. Hidden or Manipulated Content

If you’re the only one working on your website, you likely don’t need to worry too much about hidden content or CSS manipulation. Still, there’s always the possibility of this happening with less reputable plugins or an agency/contract specialist trying to cut corners to game the search results.

Sometimes content can be mistakenly hidden, but hidden content or CSS manipulation is often intentional. This can include tactics like:

- Masked DIVs hiding content

- White content on a white background or invisible text

- Content moved outside of the viewable area beyond the borders of the page

You won’t spot these on the front end, but these items have nowhere to hide in the source code. If you do discover them, get them cleared up before they’re spotted by Google’s algorithm. Another way to check for this outside of the source code is to review your site with CSS and JavaScript off, or to view your site as Googlebot, where you see only the raw content.
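The tactics above can be partly sketched as a scan for suspicious inline styles. The Python example below flags elements whose style attribute hides them or pushes them off the page. It is deliberately limited: it only reads inline styles, so anything hidden through an external stylesheet (including white-on-white text) still needs a manual look, and hidden elements such as menus or modals are often perfectly legitimate. The pattern list and names are assumptions for illustration, not an exhaustive set.

```python
import re
from html.parser import HTMLParser

# Inline-style patterns commonly associated with hidden text (assumed, not exhaustive).
HIDING_PATTERNS = {
    "display:none": re.compile(r"display\s*:\s*none"),
    "visibility:hidden": re.compile(r"visibility\s*:\s*hidden"),
    "off-screen text-indent": re.compile(r"text-indent\s*:\s*-\d{3,}"),
    "off-screen position": re.compile(r"(left|top)\s*:\s*-\d{3,}"),
    "zero font size": re.compile(r"font-size\s*:\s*0(px|em|rem|%)?\s*(;|$)"),
}


class HiddenContentAudit(HTMLParser):
    """Records every element whose inline style matches a hiding pattern."""

    def __init__(self):
        super().__init__()
        self.findings = []  # (line number, tag, reason)

    def handle_starttag(self, tag, attrs):
        style = (dict(attrs).get("style") or "").lower()
        for reason, pattern in HIDING_PATTERNS.items():
            if pattern.search(style):
                self.findings.append((self.getpos()[0], tag, reason))


def find_hidden_elements(html):
    """Return (line, tag, reason) for each element hidden via inline styles."""
    audit = HiddenContentAudit()
    audit.feed(html)
    return audit.findings


# Hypothetical page source, for illustration only.
sample = """<div style="display: none">cheap widgets best widgets</div>
<p style="text-indent:-9999px">buy widgets online</p>
<p style="color:#333">Visible copy.</p>"""

for line, tag, reason in find_hidden_elements(sample):
    print(f"line {line}: <{tag}> uses {reason}")
```

Treat each hit as a prompt to look at that line in the source, not as proof of manipulation.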

4. Verify Analytics Snippets

With all the third-party analytics platforms, Google and Facebook tracking codes, and other types of tracking snippets, you want to verify that everything is coded properly to ensure you’re collecting accurate data. Any improper tags could seriously damage the data for a campaign you’re trying to watch closely.

Likewise, given that no website is 100% secure, it’s a good idea to monitor your site for rogue tracking snippets, or tracking code that you didn’t authorize or implement.

These aren’t always malicious. They may have come from a contractor or dev who previously worked with you on your site. All the same, if you don’t have complete control over who sees the data, then you don’t want those tracking codes on your site.

You’re less likely to have errors with these codes if you use a CMS with a dashboard that generates the code for you based on an account or unique ID, like a Google Analytics plugin for WordPress. This is more of a concern if you’re manually inserting the tracking scripts into the source code of your website.
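One way to spot rogue snippets is to pull every tracking ID out of the source and compare the list against the IDs you know are yours. The sketch below does that with regular expressions for a few common ID formats (Universal Analytics, Google Analytics 4, Google Tag Manager and the Facebook Pixel init call). The patterns are approximations that can miss IDs or produce false matches, and every ID in the sample is made up.

```python
import re

# Approximate patterns for common tracking IDs (assumptions, not official formats).
TRACKER_PATTERNS = {
    "Google Analytics (Universal)": re.compile(r"\bUA-\d{4,10}-\d{1,4}\b"),
    "Google Analytics 4": re.compile(r"\bG-[A-Z0-9]{6,12}\b"),
    "Google Tag Manager": re.compile(r"\bGTM-[A-Z0-9]{4,10}\b"),
    "Facebook Pixel": re.compile(r"fbq\(\s*['\"]init['\"]\s*,\s*['\"](\d+)['\"]"),
}


def find_tracking_ids(html):
    """Return a set of (tracker name, id) pairs found anywhere in the source."""
    found = set()
    for name, pattern in TRACKER_PATTERNS.items():
        for match in pattern.finditer(html):
            # Use the captured group when the pattern has one, else the whole match.
            tracker_id = match.group(1) if pattern.groups else match.group(0)
            found.add((name, tracker_id))
    return found


def unauthorized_trackers(html, approved_ids):
    """Return the trackers whose ID is not in the approved set."""
    return sorted(t for t in find_tracking_ids(html) if t[1] not in approved_ids)


# Hypothetical page source and IDs, for illustration only.
sample = """<script async src="https://www.googletagmanager.com/gtag/js?id=G-ABC123DEF4"></script>
<script>fbq('init', '1234567890');</script>
<script>ga('create', 'UA-999999-1', 'auto');</script>"""

print(unauthorized_trackers(sample, approved_ids={"G-ABC123DEF4", "1234567890"}))
```

Here the Universal Analytics ID is the one nobody approved, so that is the snippet to trace back to its owner or remove.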

5. Link Structure and Broken Links

Link structure, especially internal linking, is a big part of passing link juice through your site, so you want to make sure that your links are properly structured and functional.

A single misplaced character can shut down a link, which can inhibit indexing and ranking of pages down the line depending on how you’ve structured your links.

A typo in a canonical link can create a mess for your optimization if the URL it points to comes back as bad.

Tools are available to scour your site for broken links, but it’s a good idea to back this up with a manual review. I’ve found errors in my own links on more than one occasion. If that mistake is within a canonical URL you can completely dismantle your SEO efforts!
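To back up a manual review, a short script can catch the “single misplaced character” kind of mistake without even visiting the links. The Python sketch below collects every anchor and canonical URL in the source and flags ones that are empty, contain whitespace, use an unexpected scheme or lack a domain. It does not request the URLs, so it won’t tell you whether a well-formed link returns a 404. The allowed-scheme list, names and sample markup are assumptions for illustration.

```python
from html.parser import HTMLParser
from urllib.parse import urlsplit

# Schemes treated as normal; "" means a relative link. Adjust to your own site.
ALLOWED_SCHEMES = {"", "http", "https", "mailto", "tel"}


class LinkCollector(HTMLParser):
    """Collects href values from <a> tags and rel=canonical <link> tags."""

    def __init__(self):
        super().__init__()
        self.links = []  # (kind, href)

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "a" and "href" in attrs:
            self.links.append(("anchor", attrs["href"] or ""))
        elif tag == "link" and "canonical" in (attrs.get("rel") or "").lower().split():
            self.links.append(("canonical", attrs.get("href") or ""))


def link_problem(href):
    """Return a short description of what looks wrong, or None if nothing does."""
    if not href.strip():
        return "empty href"
    if href != href.strip() or " " in href:
        return "contains whitespace"
    try:
        parts = urlsplit(href)
    except ValueError:
        return "unparseable URL"
    if parts.scheme.lower() not in ALLOWED_SCHEMES:
        return f"unexpected scheme '{parts.scheme}'"
    if parts.scheme in ("http", "https") and not parts.netloc:
        return "missing domain"
    return None


def audit_links(html):
    """Return (kind, href, problem) for every suspicious link in the source."""
    collector = LinkCollector()
    collector.feed(html)
    report = []
    for kind, href in collector.links:
        problem = link_problem(href)
        if problem:
            report.append((kind, href, problem))
    return report


# Hypothetical page source, for illustration only.
sample = """<link rel="canonical" href="htps://example.com/pricing">
<a href="/blog/seo-audit">SEO audit</a>
<a href="https:/example.com/about">About</a>
<a href="">Contact</a>"""

for kind, href, problem in audit_links(sample):
    print(f"{kind}: {href!r} -> {problem}")
```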

Moz has a guide on rel=canonical that I highly recommend reviewing to avoid errors.

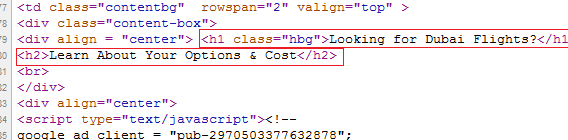

6. Check for Appropriate H Tags

Your subheadings should be used in a logical way that appropriately breaks up content, making it easy for the reader to digest.

Those headings and subheadings should also be written and optimized to entice the reader, keep them engaged, and provide the gist of what they’re about to read. Best practices for your H tags include:

- Use the H1 tag to communicate the site or page’s main purpose while utilizing high-level keywords

- Follow with H2 and H3 tags, optimizing with secondary keywords to support your high-level keywords

Review your headers within the source to make sure they’re formatted appropriately and closed with the matching closing tags.
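The heading checks above can be sketched in a few lines of Python. This example builds the page’s heading outline and reports three things: a page without exactly one H1, a heading that skips a level (an H3 directly under an H1, say), and a heading whose closing tag is missing or mismatched. The “exactly one H1” rule is a common convention assumed for the example rather than a hard requirement, and the names and sample markup are illustrative.

```python
from html.parser import HTMLParser

HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class HeadingAudit(HTMLParser):
    """Builds the heading outline and tracks headings that are never closed."""

    def __init__(self):
        super().__init__()
        self.headings = []  # [level, text] in page order
        self.unclosed = []  # heading tags that never got a matching close
        self._open = None   # heading tag currently open, if any

    def handle_starttag(self, tag, attrs):
        if tag in HEADING_TAGS:
            if self._open:
                self.unclosed.append(self._open)
            self.headings.append([int(tag[1]), ""])
            self._open = tag

    def handle_data(self, data):
        if self._open:
            self.headings[-1][1] += data

    def handle_endtag(self, tag):
        if tag == self._open:
            self._open = None


def heading_problems(html):
    """Return plain-language problems with the page's heading structure."""
    audit = HeadingAudit()
    audit.feed(html)
    audit.close()
    if audit._open:
        audit.unclosed.append(audit._open)

    problems = []
    h1_count = sum(1 for level, _ in audit.headings if level == 1)
    if h1_count != 1:
        problems.append(f"expected exactly one h1, found {h1_count}")
    previous = 0
    for level, text in audit.headings:
        if level > previous + 1:
            problems.append(f"h{level} '{text.strip()}' skips a level")
        previous = level
    for tag in audit.unclosed:
        problems.append(f"<{tag}> is never closed with a matching </{tag}>")
    return problems


# Hypothetical page source, for illustration only.
sample = """<h1>SEO Audit Guide</h1>
<h3>Meta Content</h3>
<h2>Images</h3>
<p>Body copy.</p>"""

for problem in heading_problems(sample):
    print(problem)
```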

7. Optimizing Images

An audit of your source code should also include a quick review of any images that you’ve placed in and around your content. This is a quick check to verify that you’ve included title and alt tags for each image to optimize them appropriately.

Google puts a great deal of value on alt and title tags to help determine the context of surrounding content.

I don’t recommend trying to stuff keywords into these tags, but tag them appropriately to help establish relevancy for your content and provide a boost to your SEO targeting.

Just about every CMS will provide you with fields to set the Title and Alt tags of an image when you upload or embed it, as well as to edit them later. You can quickly check these tags by viewing the source for individual pages.
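If a page has more than a handful of images, a small script is quicker than scanning the source by eye. Here is a minimal Python sketch that lists every image whose alt text is missing or blank. The function name and sample markup are made up for illustration. An empty alt is acceptable for purely decorative images, so treat the output as a list to review, not a list of errors.

```python
from html.parser import HTMLParser


class ImageAudit(HTMLParser):
    """Collects the src, alt and title of every <img> tag in the source."""

    def __init__(self):
        super().__init__()
        self.images = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attrs = dict(attrs)
            self.images.append({
                "src": attrs.get("src") or "",
                "alt": attrs.get("alt"),      # None when the attribute is absent
                "title": attrs.get("title"),
            })


def images_missing_alt(html):
    """Return the src of every image with no alt text or only whitespace."""
    audit = ImageAudit()
    audit.feed(html)
    return [img["src"] for img in audit.images
            if not (img["alt"] or "").strip()]


# Hypothetical page source, for illustration only.
sample = """<img src="/img/blue-widget.jpg" alt="Blue widget on a desk" title="Blue widget">
<img src="/img/hero.jpg">
<img src="/img/banner.png" alt="">"""

print(images_missing_alt(sample))
```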

8. Use the Meta Robots Content Properly

This is an important one to monitor, and it’s something that I find a lot of marketers ignore when they’re managing their own sites.

The meta robots tag is used to instruct search crawlers (robots) whether or not to index specific pages, as well as whether or not to follow links within that content.

Give the wrong instructions, and you’re basically nuking your search optimization by blocking a lot of your content from being crawled and indexed.

Choose the instructions carefully if you opt to include meta robots tags in your source. If you have these tags already, make sure you aren’t blocking content that you want indexed.
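To make that check concrete, the sketch below reads the meta robots tag (and the Googlebot-specific variant) from a page’s source and reports any directive that keeps the page out of the index or stops its links from being followed. It looks for noindex, nofollow and none, which stands for both at once. It reads only the HTML, so it won’t see directives sent in an HTTP header or rules in robots.txt. The names and sample markup are illustrative.

```python
from html.parser import HTMLParser

# Directives that block indexing or link following ("none" means both).
BLOCKING = {"noindex", "nofollow", "none"}


class RobotsAudit(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = {}  # crawler name -> set of directives

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attrs = dict(attrs)
        name = (attrs.get("name") or "").lower()
        if name in ("robots", "googlebot"):
            content = attrs.get("content") or ""
            values = {v.strip().lower() for v in content.split(",") if v.strip()}
            self.directives.setdefault(name, set()).update(values)


def blocking_directives(html):
    """Return {crawler: [blocking directives]} for the page, empty if none."""
    audit = RobotsAudit()
    audit.feed(html)
    blocked = {}
    for crawler, values in audit.directives.items():
        hits = sorted(values & BLOCKING)
        if hits:
            blocked[crawler] = hits
    return blocked


# Hypothetical page source, for illustration only.
sample = '<head><meta name="robots" content="noindex, follow"></head>'

print(blocking_directives(sample))
```

Running this across your most important pages is a quick way to confirm that none of them is telling crawlers to stay away.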

Conclusion

You don’t need to be a skilled web developer to pick through the source code of your website for an SEO audit.

Know the elements to watch for and use search and find functions to speed along the source code review. This way you can find and quickly fix any errors that might be inhibiting your organic optimization efforts.